At Gruntwork, we write lots of automated tests for the code in our Infrastructure as Code Library. Most of these are integration tests that deploy our code into a real AWS account, verify the code works as expected, and then clean up after themselves. Sometimes the cleanup fails, typically when a test runs into errors or hits a timeout limit, and the resources it created are left behind.

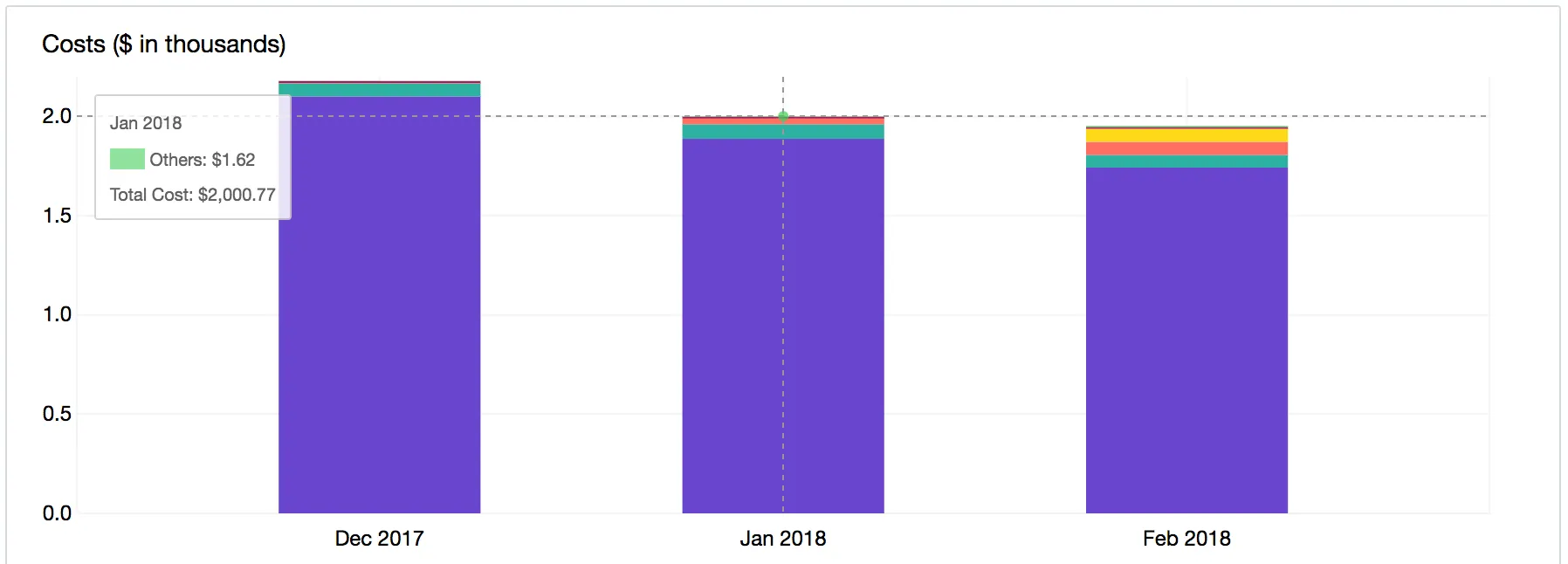

In the early days, these leftover idle resources didn’t matter much. But by the start of 2018, our AWS bill was up to $2000/month. For a company of just 3 people (at the time) with no cloud-hosted product, this was outrageously expensive.

Cutting costs

To solve this, we set out to build a tool that periodically goes through our AWS account and deletes all idle resources. We started by poring over the AWS Billing Dashboard to identify the resources costing us the most money, and then built the tool to support deleting those resources. We had to be sure we never deleted resources that a running test was still using, so we designed the tool to delete only resources older than a specified time period (e.g. older than 24h).
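The age-threshold idea can be sketched in a few lines of Python. This is only an illustration of the rule described above, not cloud-nuke's actual implementation: the function names, the resource names, and the `(name, created_at)` tuples are all hypothetical.

```python
from datetime import datetime, timedelta, timezone


def older_than(created_at, min_age, now=None):
    """Return True if the resource was created more than min_age ago."""
    now = now or datetime.now(timezone.utc)
    return now - created_at > min_age


def select_for_deletion(resources, min_age, now=None):
    """Keep only the names of resources old enough to be safely deleted.

    `resources` is a list of (name, created_at) tuples (hypothetical shape).
    """
    now = now or datetime.now(timezone.utc)
    return [name for name, created_at in resources
            if older_than(created_at, min_age, now)]


# Fixed "current time" so the example is reproducible.
now = datetime(2018, 3, 1, 12, 0, tzinfo=timezone.utc)
resources = [
    ("leftover-asg", now - timedelta(hours=30)),              # leaked by an old test
    ("running-test-instance", now - timedelta(minutes=20)),   # test still in progress
]

# Only the 30-hour-old resource passes a 24h threshold.
print(select_for_deletion(resources, timedelta(hours=24), now))
```

With a 24h threshold, the resource created 20 minutes ago is left alone, which is the property that protects tests that are still running.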

We call this tool cloud-nuke. It’s an open source, cross-platform CLI application released under the Apache 2.0 License. For example, to destroy all the (supported) resources in your AWS account that are more than 1 hour old, you can run `cloud-nuke aws --older-than 1h`.

This will delete all Auto Scaling Groups, (unprotected) EC2 Instances, Launch Configurations, Load Balancers, EBS Volumes, AMIs, Snapshots and Elastic IPs (more resources coming soon!). There’s also an extra option, `--exclude-region`, to exclude resources in certain regions:

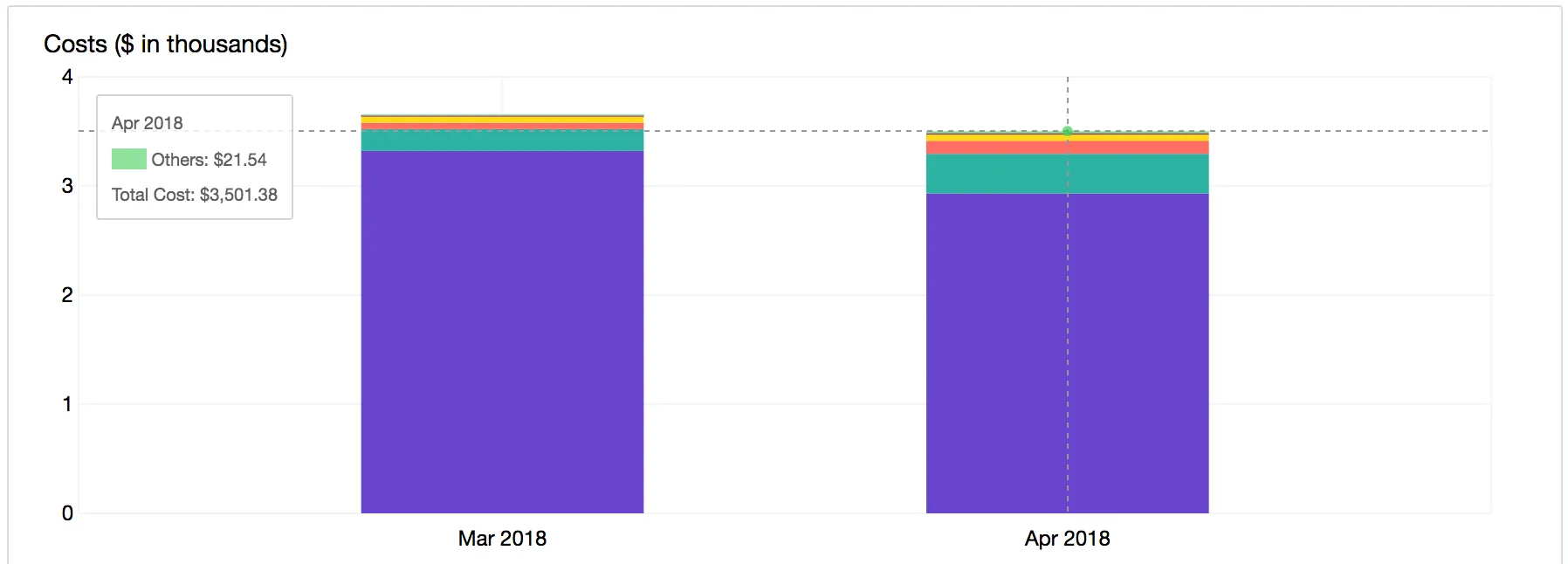

We ran cloud-nuke on a nightly basis and after a few weeks of usage, in March, we saw that our bill had increased by 75%. Whoops! After some digging, we found two reasons: first, the team had grown to 5 people; second, the timing of our cloud-nuke runs mattered more than we had expected.

After further analyzing our spending, we realized that our testing fell into two categories:

- Automated tests, which always took less than an hour.

- Manual tests, where we sometimes kept using resources for an entire day.

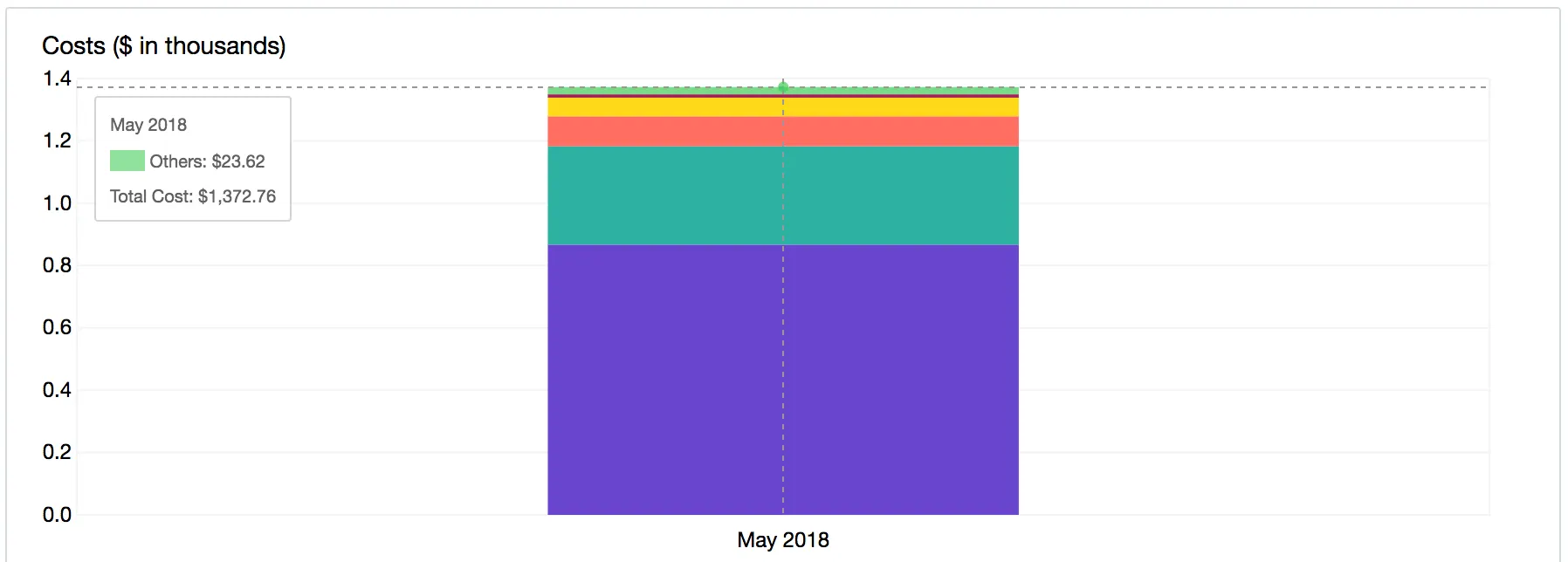

Since we were running cloud-nuke on a nightly basis, resources leaked by automated tests could hang around, costing us money, for as long as 23 hours before being deleted. But how could we delete those resources sooner without affecting people doing manual testing? We fixed this by creating separate AWS accounts for manual testing and automated testing. We now run cloud-nuke every 3 hours in the automated testing account and every 24 hours in the manual testing account. This cut our monthly spending in half.
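As a rough sketch, the two-account schedule could be expressed as a small lookup table plus a "is a run due?" check. The intervals come from the paragraph above; the account names and helper functions are hypothetical, and in practice a scheduler such as cron or a CI job would probably do this work.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical account names; the intervals are the ones described in the text.
NUKE_INTERVALS = {
    "automated-testing": timedelta(hours=3),
    "manual-testing": timedelta(hours=24),
}


def is_run_due(account, last_run, now):
    """Return True once the account's interval has elapsed since the last run."""
    return now - last_run >= NUKE_INTERVALS[account]


def runs_per_day(account):
    """How many cleanup runs the account gets in a 24-hour period."""
    return int(timedelta(hours=24) / NUKE_INTERVALS[account])


last_run = datetime(2018, 4, 1, 0, 0, tzinfo=timezone.utc)
now = last_run + timedelta(hours=4)

for account in NUKE_INTERVALS:
    print(account, is_run_due(account, last_run, now), runs_per_day(account))
```

Four hours after the last run, only the automated testing account is due for cleanup, so manual testers keep their resources for the rest of the day.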

No bark… a thousand bites

We were excited by the progress and wanted to see just how far we could push it. We noticed that while spending on compute resources like EC2 instances had dropped significantly, our monthly spend on CloudWatch requests was close to $500. This was particularly surprising because none of our tests made a large number of requests to the CloudWatch API.

What followed was a few days of searching through CloudTrail logs to figure out the source of the requests. After a while, we reached out to AWS support and they pointed us to an IAM role belonging to DataDog. Apparently, for DataDog to perform its primary monitoring functions, it needed to make a lot of requests to the CloudWatch API, which it did very quietly. Since the integration was left over from a previous experiment that we were no longer actively pursuing, we removed it.
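For readers facing a similar hunt, here is a minimal sketch of tallying CloudWatch API callers from a CloudTrail log file. It is not what we or AWS support actually ran. It assumes the standard CloudTrail JSON layout (a top-level `Records` list whose entries carry `eventSource` and `userIdentity.arn`) and assumes the relevant calls are recorded in your trail at all, which may not hold for every CloudWatch API. The role ARNs and sample records are made up.

```python
import json
from collections import Counter

# Event source CloudTrail uses for CloudWatch API calls.
CLOUDWATCH_EVENT_SOURCE = "monitoring.amazonaws.com"

# Hypothetical ARNs, for illustration only.
MONITORING_ROLE = "arn:aws:sts::111111111111:assumed-role/ExampleMonitoringRole/session"
TEST_ROLE = "arn:aws:sts::111111111111:assumed-role/ExampleTestRole/session"


def count_callers(log_text, event_source=CLOUDWATCH_EVENT_SOURCE):
    """Count calls per caller ARN for one event source in a CloudTrail log."""
    records = json.loads(log_text).get("Records", [])
    callers = Counter()
    for record in records:
        if record.get("eventSource") != event_source:
            continue
        arn = record.get("userIdentity", {}).get("arn", "unknown")
        callers[arn] += 1
    return callers


# Made-up sample standing in for the contents of a CloudTrail log file.
sample_log = json.dumps({
    "Records": [
        {"eventSource": "monitoring.amazonaws.com", "eventName": "DescribeAlarms",
         "userIdentity": {"arn": MONITORING_ROLE}},
        {"eventSource": "monitoring.amazonaws.com", "eventName": "DescribeAlarms",
         "userIdentity": {"arn": MONITORING_ROLE}},
        {"eventSource": "monitoring.amazonaws.com", "eventName": "DescribeAlarms",
         "userIdentity": {"arn": TEST_ROLE}},
        {"eventSource": "ec2.amazonaws.com", "eventName": "RunInstances",
         "userIdentity": {"arn": TEST_ROLE}},
    ]
})

# Print callers from most to least active.
for arn, calls in count_callers(sample_log).most_common():
    print(calls, arn)
```

Sorting callers by call count puts the noisiest identity first, which is the kind of outlier worth raising with AWS support.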

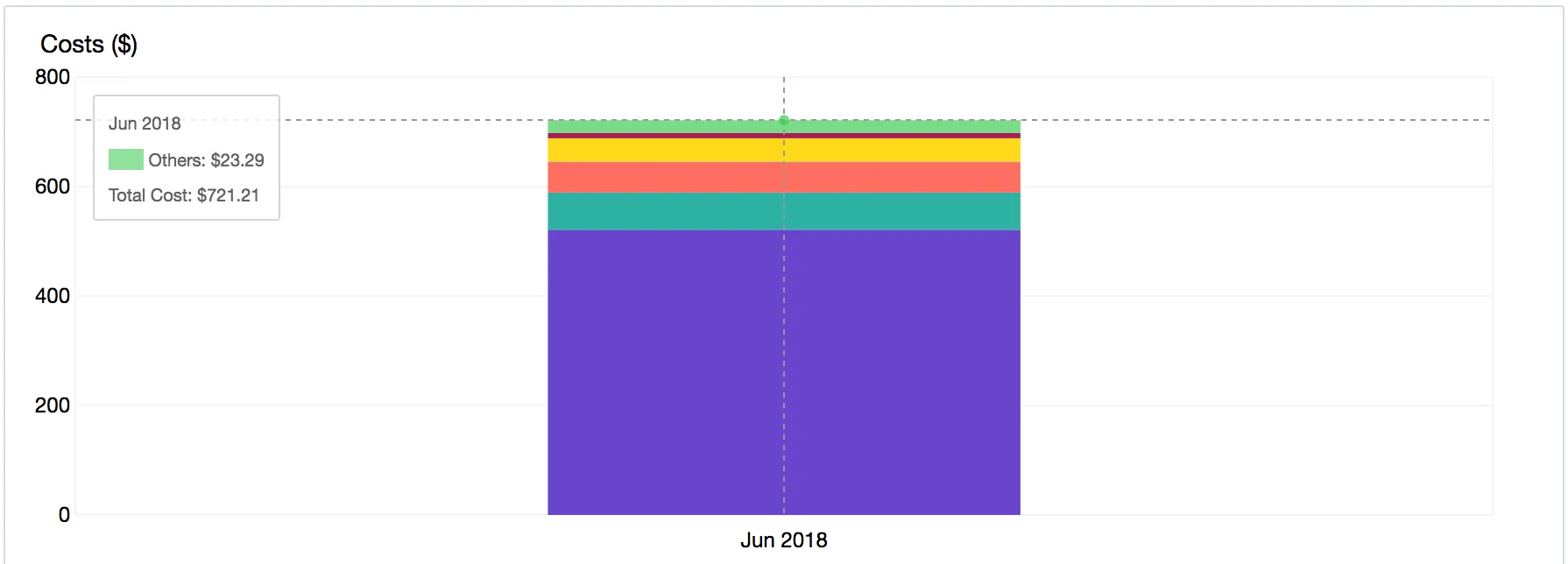

In June, for the first time, our bill came in under $1000, and we’ve been spending only about $500 per month on average since then.

The road ahead

Here are our main takeaways for reducing the cost of your testing environments:

- Run cloud-nuke on a scheduled basis.

- Use separate accounts for workloads with different usage patterns (such as automated and manual testing), each with its own cloud-nuke schedule.

- Learn to scan your bill and leverage AWS support to track down weird outliers like DataDog.

We’re constantly looking for more ways to reduce cost as the products we build continue to evolve. In the meantime, cloud-nuke is open source and available to be used in your own cloud environment. Here are some features we’d love to add in the future (PRs are welcome!):

- Filter resources by tags

- Support for nuking more resources

- Support for GCP and Azure

Take it for a spin and let us know what you think!
