Once a month, we send out a newsletter to all Gruntwork customers that describes all the updates we’ve made in the last month, news in the DevOps industry, and important security updates. Note that many of the links below go to private repos in the Gruntwork Infrastructure as Code Library and Reference Architecture that are only accessible to customers.

Hello Grunts,

In the last couple of months, we've been busy with a number of upgrades for the Gruntwork Infrastructure as Code Library: we've updated the entire library to be compatible with version 3.x of the AWS Provider for Terraform, updated all but a handful of repos to work with version 0.13.x of Terraform, and begun a project to update our Gruntwork Compliance offering to be compatible with the CIS AWS Foundations Benchmark v1.3. We've also released a new Commercial Support offering for Terragrunt and Terratest, created a new module that makes it easier to manage IAM to RBAC mapping in EKS, added a new module for creating and managing private S3 buckets, published a blog post on how to protect your GitHub identity, and made a huge number of other bug fixes and improvements. Finally, we'll be taking a holiday break for two weeks in December as usual, so please plan accordingly!

As always, if you have any questions or need help, email us at support@gruntwork.io!

Gruntwork Updates

AWS Provider 3.x upgrade

Motivation: A few months ago, AWS released version 3.0.0 of the AWS Provider for Terraform. It brought lots of new features, but also included a large number of backwards incompatible changes that made upgrading difficult.

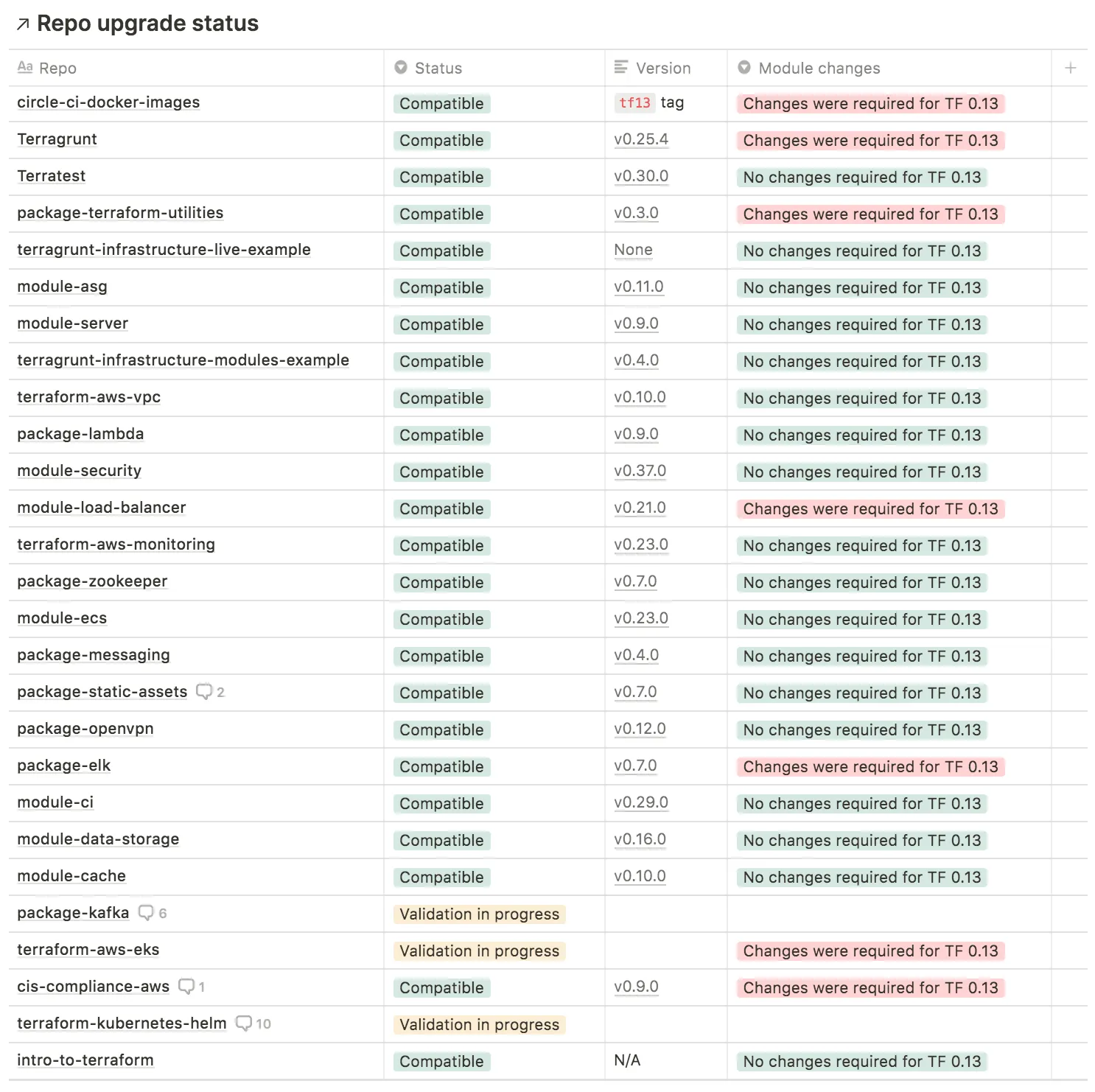

Solution: We’ve updated our entire Infrastructure as Code Library to work with AWS Provider 3.0.0. Here’s a snapshot of the compatibility table so you have a sense of what it takes to upgrade and test everything:

What to do about it: We’ve released a dedicated guide with instructions, a version compatibility table, and commits that show an example upgrade of the Acme Reference Architecture: How to update to version 3 of the Terraform AWS Provider. Please follow the steps in this guide to upgrade all your code ASAP!

Terraform 0.13.x upgrade

Motivation: A few months ago, HashiCorp released version 0.13.0 of Terraform. It brought lots of new features, but also included several backwards incompatible changes that made upgrading difficult.

Solution: We are currently working on testing and upgrading all of our repos to be compatible with Terraform 0.13.0. All repos have been upgraded except the following:

- terraform-aws-eks: This repo was significantly affected by the backwards incompatible changes in 0.13.0, so some parts need to be rewritten, which will take some work. You can follow issue 221 for progress.

- GCP repos: We have been focusing on AWS first and have not yet started updating the Google Cloud Platform repos.

Here’s a snapshot of the compatibility table so you have a sense of what it takes to upgrade and test everything:

What to do about it: We recommend waiting to upgrade until (a) we finish the last remaining AWS repo and (b) we publish a migration guide. However, if you're not using terraform-aws-eks and wish to upgrade immediately, you can check out the Gruntwork Terraform 0.13 Compatibility Table to see which version numbers you need to upgrade to.
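For reference, one of the most visible changes in Terraform 0.13 is the new object syntax for `required_providers`, which adds an explicit `source` address for each provider. Below is a minimal sketch of the version constraints a root module might declare after upgrading to Terraform 0.13 and AWS Provider 3.x. The specific constraints are only examples, so use the compatibility tables to choose the versions that match your modules.

```hcl
terraform {
  # Refuse to run with Terraform versions older than 0.13.
  required_version = ">= 0.13"

  required_providers {
    aws = {
      # New in 0.13: an explicit source address for each provider.
      source = "hashicorp/aws"

      # Allow any 3.x release of the AWS provider, but not 2.x or 4.x.
      version = "~> 3.0"
    }
  }
}
```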

CIS AWS Foundations Benchmark v1.3

Motivation: A few months ago, the Center for Internet Security (CIS) released version 1.3.0 of the AWS Foundations Benchmark. This introduced several new controls that we needed to incorporate into our Gruntwork Compliance for CIS product.

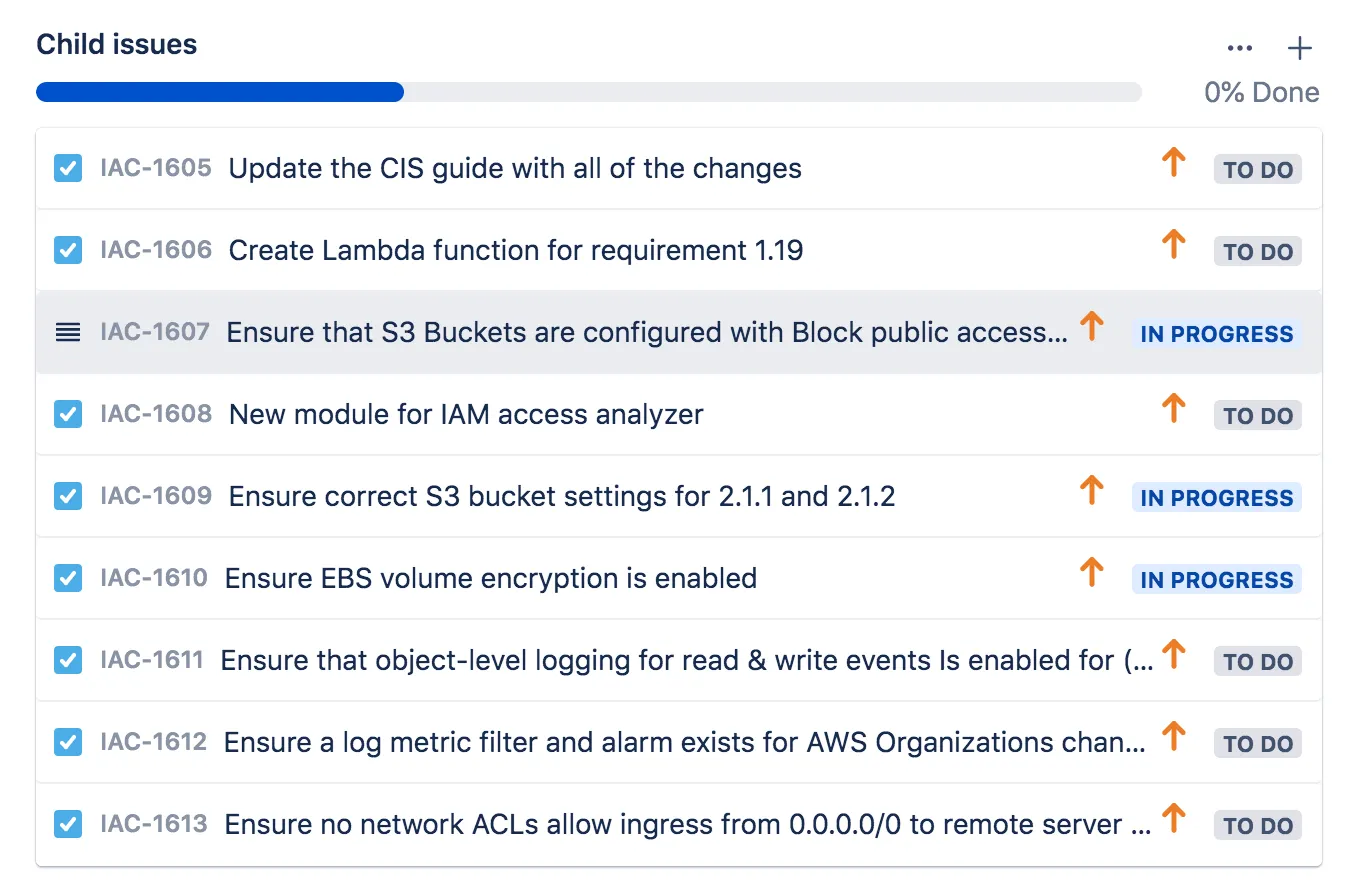

Solution: We are currently working on incorporating the new controls introduced in 1.3.0. Here’s a snapshot of the work in progress:

What to do about it: For now, sit tight! We will announce when everything has been upgraded and tested to be compatible with 1.3.0, and publish a migration guide to help you update.

Introducing: Commercial support for Terragrunt and Terratest

Motivation: Two of our open source projects, Terragrunt and Terratest, are growing more and more popular each month, with more and more companies relying on them in production, but the team that maintains them is still quite small.

Solution: We are now offering commercial support for Terragrunt and Terratest! This includes support via email, chat, and phone/video, code reviews, prioritized bug fixes, and prioritized feature development. If your company relies on Terragrunt or Terratest in production, you can now get help directly from the team that created these tools, and make sure you’re never blocked. And as a wonderful side benefit, we use your funds to support these open source tools and make them better for everyone.

What to do about it: Check out the Terragrunt Commercial Support and Terratest Commercial Support pages for more details, including the available pricing plans and SLAs. If you’re interested in more info, please contact us at [info@gruntwork.io](mailto:info@gruntwork.io?subject=Commercial support for Terragrunt and Terratest)!

New EKS module: aws-auth-merger

Motivation: Managing authentication and authorization for the Kubernetes API is one of the most basic operations you need to accomplish for EKS clusters. The official way to manage access to the Kubernetes API is to manage mappings from IAM roles and users to Kubernetes RBAC users and groups in a single, central ConfigMap. The central nature of the ConfigMap poses a challenge for certain use cases, especially when managing access with Terraform as there is no way to dynamically update the ConfigMap across multiple modules.

Solution: To support use cases where you want to break up the management of the aws-auth ConfigMap, we implemented the eks-aws-auth-merger! This module lets you split the central ConfigMap into multiple, separate ConfigMaps, each configuring a subset of the mappings you ultimately want. You can then update entries in isolated modules (e.g., when you add a new IAM role in a separate module from the EKS cluster). The aws-auth-merger watches for aws-auth compatible ConfigMaps and, every time it detects a change, merges them together into the central aws-auth ConfigMap that EKS uses for authentication.

What to do about it: Check out the deployment docs and take the aws-auth-merger for a spin!
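Here is a minimal sketch of a module that manages its own slice of the mappings, using the Terraform Kubernetes provider's `kubernetes_config_map` resource. It assumes the merger has been deployed to watch a namespace called `aws-auth-merger` and that it accepts the same `mapRoles` format as the central `aws-auth` ConfigMap. The namespace, ConfigMap name, username, group, and `ci_role_arn` variable are all hypothetical, so check the module docs for the exact conventions the merger expects.

```hcl
variable "ci_role_arn" {
  description = "Hypothetical: ARN of an IAM role managed in this (separate) module."
  type        = string
}

resource "kubernetes_config_map" "ci_role_mapping" {
  metadata {
    # Hypothetical name: each module owns its own ConfigMap.
    name = "ci-role-mapping"

    # Assumed: the namespace the aws-auth-merger is configured to watch.
    namespace = "aws-auth-merger"
  }

  data = {
    # Same structure as the mapRoles key of the central aws-auth ConfigMap.
    mapRoles = yamlencode([
      {
        rolearn  = var.ci_role_arn
        username = "ci"
        groups   = ["ci-deployers"]
      },
    ])
  }
}
```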

New module: private-s3-bucket

Motivation: Gruntwork customers have been asking for a reusable module for creating an S3 bucket that implements best practices to keep the contents of the bucket secure and private.

Solution: We’ve created a new private-s3-bucket module that can be used to create an Amazon S3 bucket that enforces best practices for private access:

- No public access: all public access is completely blocked.

- Encryption at rest: server-side encryption is enabled, optionally with a custom KMS key.

- Encryption in transit: the bucket can only be accessed over TLS.

The module couldn’t be simpler to use:

```hcl
module "s3_bucket" {
  source = "git::git@github.com:gruntwork-io/module-security.git//modules/private-s3-bucket?ref=v0.40.1"

  name = "my-example-bucket-name"
}
```
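Here is a minimal sketch of what those three guarantees look like in raw Terraform, using AWS Provider 3.x resources. It is not the module's actual implementation, which also handles things like custom KMS keys, ACLs, and replication. The `bucket_name` variable is a placeholder.

```hcl
variable "bucket_name" {
  type = string
}

resource "aws_s3_bucket" "private" {
  bucket = var.bucket_name

  # Encryption at rest (AWS provider 3.x inline syntax, AWS-managed KMS key).
  server_side_encryption_configuration {
    rule {
      apply_server_side_encryption_by_default {
        sse_algorithm = "aws:kms"
      }
    }
  }
}

# No public access: block every form of public ACL and public bucket policy.
resource "aws_s3_bucket_public_access_block" "private" {
  bucket                  = aws_s3_bucket.private.id
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}

# Encryption in transit: deny any request that is not made over TLS.
data "aws_iam_policy_document" "tls_only" {
  statement {
    sid     = "AllowTLSRequestsOnly"
    effect  = "Deny"
    actions = ["s3:*"]
    resources = [
      aws_s3_bucket.private.arn,
      "${aws_s3_bucket.private.arn}/*",
    ]

    principals {
      type        = "*"
      identifiers = ["*"]
    }

    condition {
      test     = "Bool"
      variable = "aws:SecureTransport"
      values   = ["false"]
    }
  }
}

resource "aws_s3_bucket_policy" "tls_only" {
  bucket = aws_s3_bucket.private.id
  policy = data.aws_iam_policy_document.tls_only.json
}
```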

What to do about it: Give the private-s3-bucket module a shot and let us know what you think!

New module: ebs-encryption-multi-region

Motivation: It’s easy to enable encryption on Elastic Block Store volumes, but it’s just as easy to forget to do it. We want to make it easy to do the secure thing by default.

Solution: We've added a new ebs-encryption-multi-region module to module-security! This module will enable EBS encryption by default in all regions (or, optionally, a subset of regions). We've also incorporated EBS encryption by default into the account-baseline-* modules in the Gruntwork Landing Zone.

What to do about it: Check out the ebs-encryption-multi-region example to get started.
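EBS encryption by default is a per-region account setting, which is why a multi-region module is handy. Terraform can't loop over provider configurations, so covering N regions means N provider aliases and N resources. Here is a minimal sketch of the underlying idea for two regions, using the AWS provider's `aws_ebs_encryption_by_default` resource. The choice of regions is just an example, and this is not the module's actual code.

```hcl
# One provider configuration per region to cover (two shown here).
provider "aws" {
  alias  = "us_east_1"
  region = "us-east-1"
}

provider "aws" {
  alias  = "eu_west_1"
  region = "eu-west-1"
}

# The setting is regional, so it must be enabled separately in each region.
resource "aws_ebs_encryption_by_default" "us_east_1" {
  provider = aws.us_east_1
  enabled  = true
}

resource "aws_ebs_encryption_by_default" "eu_west_1" {
  provider = aws.eu_west_1
  enabled  = true
}
```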

How to Spoof Any User on Github…and What to Do to Prevent It

Motivation: While implementing a solution to improve the integrity of our GitHub code, we discovered a disturbing flaw in GitHub’s design that allows you to spoof any GitHub user when committing code.

Solution: Here’s the short version:

- By default, GitHub does no validation of commit authors, so by running `git config user.name` and `git config user.email`, you can pretend to be any user you want!

- To protect against this, you can sign your Git commits with a GPG key, and configure GitHub to require all commits to be signed.

For the full details, see our blog post.

What to do about it: Read How to Spoof Any User on Github…and What to Do to Prevent It to learn how to sign your commits, and consider configuring your GitHub org to require signed commits!
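If you manage your GitHub org with Terraform, one way to enforce signed commits is a branch protection rule. The sketch below assumes a recent version of the GitHub Terraform provider, which exposes a `require_signed_commits` argument on `github_branch_protection`. The repository name and branch pattern are hypothetical. Note that the rule applies to each protected branch, not to every branch in the org.

```hcl
# Assumes the GitHub provider is configured with credentials that can
# administer the repository.
resource "github_branch_protection" "main" {
  # Hypothetical repository; the provider accepts a repository name or node ID.
  repository_id = "example-repo"
  pattern       = "main"

  # Reject commits on matching branches that lack a verified signature.
  require_signed_commits = true
}
```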

Winter break, 2020

Motivation: At Gruntwork, we are a human-friendly company, and we believe employees should be able to take time off to spend time with their friends and families, away from work.

Solution: The entire Gruntwork team will be on vacation December 21st — January 1st. During this time, there may not be anyone around to respond to support inquiries, so please plan accordingly.

What to do about it: We hope you’re able to relax and enjoy some time off as well. Happy holidays!

Open Source Updates

Terragrunt

- v0.23.38: We've further optimized the dependency fetch optimization routine so that it now skips plugin installs.

- v0.23.39: This release fixes dependency config loading on Windows.

- v0.23.40: We've added a new `aws-provider-patch` command that can be used to override attributes in nested `provider` blocks. Refer to the command docs for more info.

- v0.24.0: The `lock_table` attribute for the `s3` remote state backend is now marked as deprecated, and Terragrunt will automatically convert it to the preferred `dynamodb_table` attribute. This means that existing configurations using the `lock_table` attribute will need to be reinitialized.

- v0.24.1: Terragrunt will now automatically retry commands if it gets a "429 Too Many Requests" error from app.terraform.io.

- v0.24.2: Terragrunt will now automatically retry on several different flavors of the "429 Too Many Requests" error from app.terraform.io.

- v0.24.3: Terragrunt will now use the Terragrunt download directory for setting up shallow dependency fetching, as described in the dependency optimization documentation.

- v0.24.4: When creating an S3 bucket for state storage, Terragrunt will now add an IAM policy that only allows the S3 bucket to be accessed via TLS. You can disable this policy (NOT recommended!) via the `skip_bucket_enforced_tls` setting.

- v0.25.0: Terraform 0.13 upgrade: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.25.1: The `sops_decrypt_file` function will now cache decrypted files in memory while Terragrunt runs. This can lead to a significant speedup, as it ensures that each unique file path is decrypted at most once per run.

- v0.25.2: Previously, Terragrunt logged detailed information about changes in the config for the `remote_state` block, which could include secrets. Now these detailed change logs have been demoted to `debug` level and will only be logged if debug logging is enabled (environment variable `TG_LOG=debug`).

- v0.25.3: You can now define your own custom retryable errors via the `retryable_errors` configuration attribute.

- v0.25.4: The `aws-provider-patch` command now allows you to override attributes in nested blocks: e.g., override the `role_arn` attribute in an `assume_role { ... }` block. This is to work around yet another Terraform bug with `import`.

- v0.25.5: This release fixes a bug in the auto S3 bucket creation routine, where it would ignore the `skip_` flags under certain circumstances where the backend configuration is modified (e.g., modifying the bucket name or object key location).

- v0.26.0: This release contains a breaking change to the Terragrunt configuration options for access logging on the remote state S3 bucket. It fixes a bug in the Auto Init routine that creates S3 buckets to store Terraform state, where the bucket was configured to write its access logs to itself, causing a constant loop of log generation that resulted in noisy logs and potentially higher S3 costs.
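Several of these changes show up directly in `terragrunt.hcl`. The sketch below uses the preferred `dynamodb_table` attribute from v0.24.0 and the `retryable_errors` attribute from v0.25.3. The bucket name, table name, region, and error patterns are placeholders.

```hcl
# terragrunt.hcl
remote_state {
  backend = "s3"
  config = {
    bucket  = "example-terraform-state" # placeholder bucket name
    key     = "${path_relative_to_include()}/terraform.tfstate"
    region  = "us-east-1"
    encrypt = true

    # Preferred over the deprecated lock_table attribute (v0.24.0+).
    dynamodb_table = "example-terraform-locks" # placeholder table name
  }
}

# Retry any Terraform command whose output matches one of these regexes.
retryable_errors = [
  "(?s).*Error installing provider.*tcp.*connection reset by peer.*",
  "(?s).*429 Too Many Requests.*",
]
```

As we understand it, setting `retryable_errors` replaces Terragrunt's built-in list rather than extending it, so include any default patterns you still want retried.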

Terratest

- v0.29.1: Add a new `GetTargetAzureSubscription` method to find the correct target Azure Subscription ID. Make a number of changes to the internals of this repo, including moving all Azure examples and tests under `azure` folders, and hooking up a test pipeline for Azure.

- v0.30.0: We have verified that this repo is compatible with Terraform `0.13.x`! From this release onward, we will only be running tests with Terraform `0.13.x` against this repo, so we recommend updating to `0.13.x` soon!

- v0.30.1: Fix high severity vulnerability CVE-2020-14001 in the github-pages site generator used for the Terratest documentation website.

- v0.30.2: This release introduces helper functions for getting ActionGroup resources in Azure: `GetActionGroupResource` and `GetActionGroupResourceE`.

- v0.30.3: Add helper functions for getting and testing Availability Sets in Azure. Check out https://github.com/gruntwork-io/terratest/blob/master/modules/azure/availabilityset.go for the list of functions. Also add two new collections helper functions for strings sliced by a separator (`GetSliceLastValueE`, `GetSliceIndexValueE`).

- v0.30.4: This release introduces helper functions for testing and working with Azure Network Security Groups. See modules/azure/nsg.go for the list of available functions.

- v0.30.5: Adds helper functions to the `aws` package to create, get, and delete ECR repositories.

- v0.30.6: This release adds helper functions for verifying network modules in Azure. Refer to modules/azure/networkinterface.go, modules/azure/publicaddress.go, and modules/azure/virtualnetwork.go for the list of new functions.

- v0.30.7: Adds a new function that lets users of `docker-compose run xxx` capture only `stdout`. Say you have a script that logs some output to `stderr` and some to `stdout`. If you only want to capture `stdout` in your consumer, perhaps to examine and run a comparison on a string that your script generated, you can now do that without also capturing all the other output. See the example usage in the test.

- v0.30.8: Added a new `GetEcsClusterWithInclude` method you can use to fetch ECS cluster information and specify the data you wish the response to include (e.g., tags, settings, statistics, etc.).

- v0.30.9: This release adds helper functions for working with Azure SQL DB. Refer to https://github.com/gruntwork-io/terratest/blob/master/modules/azure/sql.go for the list of supported functions.

- v0.30.10: Introduces `k8s.NewTunnelWithLogger`, which can be used to customize the Terratest Logger used with the Kubernetes tunneling capabilities.

- v0.30.11: Added a `GetDockerHost` function that can be used to return the proper hostname to use for talking to services running in Docker. This is typically `localhost`, but on some systems it can be overridden with the `DOCKER_HOST` environment variable, which `GetDockerHost` knows how to read and parse.

- v0.30.12: This release adds helper functions for working with Azure VMs and Resource Groups. Refer to the following files for the list of supported functions: compute.go, disk.go, and resourcegroup.go.

- v0.30.13: This release adds helper functions for working with Azure Load Balancer. Refer to loadbalancer.go for the list of supported functions.

- v0.30.14: Added new functions for checking DNS entries with Terratest under the `dns-helper` package. Refer to dns_helper.go for the list of available functions.

- v0.30.15: This release introduces a new helper, `terraform.WithDefaultRetryableErrors`, which returns a new `terraform.Options` struct with the retryable errors populated with a set of reasonable defaults. You can review the default list in the new `options.DefaultRetryableTerraformErrors` variable.

- v0.30.16: This release introduces additional support for Terraform plan testing. You can now run `terraform plan` and `terraform show -json` to introspect the plan. See the new terraform_aws_example_plan_test.go example test for how to use this functionality.

- v0.30.17: Fix a bug where the `Clone` method in `terraform.Options` was not properly handling the `SshAgent` and `Logger` fields within the struct. We now shallow clone these values, as it doesn't make sense to deep clone a channel or logger, respectively.

- v0.30.18: Added a new `k8s.WaitUntilSecretAvailable` method that you can use to have your test code wait until a Kubernetes secret is present on the cluster. This is useful in cases where secrets are not immediately available because they are being provisioned asynchronously: e.g., when using `ClusterIssuer` to request a certificate.

- v0.30.19: Fix an infinite loop in the `String()` methods of two error structs in the `terraform` package: `TgInvalidBinary` and `OutputKeyNotFound`.

- v0.30.20: This release adds helper functions for working with Azure MySQL DB. Refer to mysql.go for the list of supported functions.

- v0.30.21: Fix the deployment process so binaries are created on every release.

terraform-aws-nomad

- v0.6.5: Set default values for `availability_zones` and `subnet_ids` to `null` in `nomad-cluster`. As of AWS Provider 3.x, only one of these parameters may be set at a time on an Auto Scaling Group, so we now have to use `null` rather than an empty list as our default.

- v0.6.6: The `nomad-cluster` module now sets the `ignore_changes` lifecycle setting on the `load_balancers` and `target_group_arns` attributes.

- v0.6.7: The `nomad-cluster` module now provides output variables with information about the IAM instance profile: `iam_instance_profile_arn`, `iam_instance_profile_id`, and `iam_instance_profile_name`.
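The AWS Provider 3.x changes in this repo and the next two follow a common pattern for Auto Scaling Groups. Below is a minimal sketch of that pattern, not the exact module code. `subnet_ids` and `availability_zones` default to `null`, so only the one you actually set is passed to the ASG. `ignore_changes` stops Terraform from reverting load balancer attachments that are managed elsewhere (e.g., via `aws_autoscaling_attachment`). The launch configuration name, sizes, and name prefix are placeholders.

```hcl
variable "subnet_ids" {
  description = "Subnets to launch into. Set this OR availability_zones, not both."
  type        = list(string)
  default     = null
}

variable "availability_zones" {
  description = "AZs to launch into. Set this OR subnet_ids, not both."
  type        = list(string)
  default     = null
}

variable "launch_configuration_name" {
  description = "Placeholder: name of an existing launch configuration."
  type        = string
}

resource "aws_autoscaling_group" "cluster" {
  name_prefix          = "example-cluster-"
  launch_configuration = var.launch_configuration_name
  min_size             = 3
  max_size             = 3

  # null leaves an argument unset; an empty list would count as setting it,
  # which AWS Provider 3.x rejects when both arguments are present.
  vpc_zone_identifier = var.subnet_ids
  availability_zones  = var.availability_zones

  lifecycle {
    # Let separately managed attachments change these without a perpetual diff.
    ignore_changes = [load_balancers, target_group_arns]
  }
}
```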

terraform-aws-consul

- v0.7.8: Fix `shellcheck` warnings in `install-consul`, `install-dnsmasq`, and `run-consul`.

- v0.7.9: Added a new `--verify-server-hostname` flag to `run-consul` that, when set, enables server hostname verification as part of RPC encryption.

- v0.7.10: For compatibility with AWS Provider 3.x, the `consul-cluster` module now sets the `ignore_changes` lifecycle setting on the `load_balancers` and `target_group_arns` attributes.

- v0.7.11: You can now specify a custom IP address for `dnsmasq` to listen on using the new `--dnsmasq-listen-address` option.

- v0.8.0: We have verified that this repo is compatible with Terraform `0.13.x`!

terraform-aws-vault

- v0.13.9: Update this repo to work with AWS Provider 3.x. This includes defaulting `subnet_ids` and `availability_zones` to `null` instead of an empty list, as AWS Provider 3.x only allows one or the other of these parameters to be set.

- v0.13.10: The `vault-cluster` module now provides output variables with information about the IAM instance profile: `iam_instance_profile_arn`, `iam_instance_profile_id`, and `iam_instance_profile_name`.

- v0.13.11: The `vault-cluster` module now sets the `ignore_changes` lifecycle setting on the `load_balancers` and `target_group_arns` attributes.

- v0.14.0: We have verified that this repo is compatible with Terraform `0.13.x`!

terraform-aws-influx

- v0.1.3: The modules in this repo are now compatible with AWS provider v3.

terraform-google-consul

- v0.5.0: This is a major release that adds support for rolling cluster updates, Consul 1.8.3 and a bunch of minor improvements.

terraform-google-gke

- v0.5.0: This release upgrades the examples and tests to use Helm 3 instead of Helm 2 with Tiller. It also adds support for adding resource labels to GKE clusters and fixes a bug where setting `var.enable_network_policy` to `false` caused cluster creation to fail.

helm-kubernetes-services

- v0.1.3: You can now set additional labels on the `Deployment` and `Pod` using the `additionalDeploymentLabels` and `additionalPodLabels` inputs.

bash-commons

- v0.1.3: Introduce a new helper script that can be used to wait for apt locks to be released. This is useful in infrastructure setup scripts (e.g., `packer`) where the nodes may start updating packages as they boot, preventing you from interacting with `apt`.

terraform-aws-couchbase

- v0.3.0: Updated this repo to work with the latest versions of Couchbase (6.x), Sync Gateway (2.x), and the Terraform AWS Provider (3.x).

- v0.4.0: We have verified that this repo is compatible with Terraform `0.13.x`!

Kubergrunt

- v0.6.0: The `helm` subcommand was removed in this release, now that Helm v2 is scheduled for end of life.

- v0.6.1: This introduces `kubergrunt eks sync-core-components`, which can be used to roll out updates to the core components of an EKS cluster after upgrading the Kubernetes version. Refer to the AWS docs on the topic for more details.

- v0.6.2: The VPC CNI version that `kubergrunt eks sync-core-components` upgrades to has been bumped to the latest version recommended by AWS (v1.7.5).

- v0.6.3: `eks sync-core-components` now supports Kubernetes version 1.18.

- v0.6.4: `eks verify` used to assume `kubergrunt` was installed in a location included in the `PATH` environment variable. Now it uses the location of the currently executing `kubergrunt` binary.

cloud-nuke

- v0.1.21: The nuke routine for `s3` has been optimized for memory efficiency. Previously, the routine paged in and stored all objects in the bucket in memory before starting the deletion routine. Now the routine deletes objects as they are paged in.

- v0.1.22: This introduces the ability to nuke `ecscluster` resources using `cloud-nuke`, and to choose whether or not to apply age filtering, as described in the `cloud-nuke` docs.

- v0.1.23: This refines the ability to nuke `ecscluster` resources so that it only targets ECS clusters in the `ACTIVE` state.

fetch

- v0.3.11: `fetch` will now log the file count after extraction. It will also skip Git tags that don't follow SemVer. This allows you to use it with repos that use both SemVer and non-SemVer tags.

Other updates

terraform-aws-eks

- v0.22.1: Starting with this release, we no longer use `kubergrunt` to get the OIDC provider thumbprint, and instead rely on native Terraform functionality.

- v0.22.2: The `eks-cluster-workers` module can now be configured to take in external dependencies as variables instead of looking the info up dynamically.

- v0.23.0: This release introduces `eks-aws-auth-merger`, which is an alternative to `eks-k8s-role-mapping` for managing IAM role to RBAC group mappings. This module uses the `aws-auth-merger` tool to watch for `ConfigMaps` in a specified namespace and merge them together into the `aws-auth` `ConfigMap` at runtime. You can learn more about it in the module docs.

- v0.23.1: You can now adjust the namespace that the core services are deployed into (`eks-cluster-control-plane`, `eks-alb-ingress-controller`, `eks-cloudwatch-container-logs`, `eks-k8s-cluster-autoscaler`).

- v0.23.2: The `eks-cluster-workers` module will now gracefully handle situations where the IAM role is externally deleted.

- v0.23.3: You can now optionally turn off the `eks-aws-auth-merger` module using the `create_resources` variable.

- v0.23.4: Bump the `executable-dependency` module version so that the downloaded `kubergrunt` binary correctly has `744` permissions.

- v0.24.0: Variables and outputs have been renamed in this release. Refer to the release notes for more info.

- v0.25.0: Switch to the new location of the `cluster-autoscaler` Helm chart so that the module continues to work after the `stable` and `incubator` repos are decommissioned in November.

- v0.26.0: The automatic cluster upgrade feature now uses `kubergrunt eks sync-core-components` instead of an embedded script. This allows you to independently upgrade to newer EKS cluster versions as they are released without updating the module version.

- v0.26.1: You can now configure the `triggerLoopOnEvent` setting on the `external-dns` service.

- v0.27.0: The `fluentd`-based log shipping module (`eks-cloudwatch-container-logs`) has been deprecated and replaced by a new module based on `fluent-bit`. The new module supports Firehose and Kinesis as targets in addition to CloudWatch, while also being more efficient in terms of underlying resource usage. Refer to the migration guide for information on how to update. Additionally, the default Kubernetes version used by the module has been updated to 1.18. Note that you will need `kubergrunt` v0.6.3 or newer if you wish to upgrade your existing EKS clusters to Kubernetes version 1.18.

- v0.27.1: Gracefully handle the case where `use_existing_cluster_config = false` and `use_cluster_security_group = true`.

- v0.27.2: Fix a bug in `eks-container-logs` where Elasticsearch output was being enabled by default. This also fixes a bug where the boolean encoding in the Helm chart values was incorrect. Also expose the ability to configure `pod_resources` for the DaemonSet in `eks-container-logs`.
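For context on v0.22.1: one common way to compute the OIDC provider thumbprint natively in Terraform is the `tls_certificate` data source from the `hashicorp/tls` provider (3.x or newer). This is a minimal sketch of that general pattern, not necessarily what the module does internally. The `oidc_issuer_url` variable is a placeholder for your cluster's issuer URL, such as the `identity[0].oidc[0].issuer` attribute of an `aws_eks_cluster`.

```hcl
variable "oidc_issuer_url" {
  description = "Placeholder: the EKS cluster's OIDC issuer URL (https://...)."
  type        = string
}

# Fetch the TLS certificate chain served by the issuer endpoint.
data "tls_certificate" "oidc" {
  url = var.oidc_issuer_url
}

resource "aws_iam_openid_connect_provider" "eks" {
  url            = var.oidc_issuer_url
  client_id_list = ["sts.amazonaws.com"]

  # Thumbprint computed inside Terraform, with no external binary required.
  # Uses the first certificate in the returned chain, as in the provider docs' EKS example.
  thumbprint_list = [data.tls_certificate.oidc.certificates[0].sha1_fingerprint]
}
```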

module-ecs

- v0.21.2: Set a `default_capacity_provider_strategy` when providing capacity providers for the ECS cluster.

- v0.21.3: You can now use the `cluster_asg_metrics_enabled` variable to specify the metrics to collect for the ASG deployed via the `ecs-cluster` module.

- v0.21.4: You can now specify the launch type for the `ecs-daemon-service` module via the new `launch_type` input variable.

- v0.21.5: Fix a bug in the deployment check scripts that made them incompatible with the `awsvpc` networking mode on EC2-based ECS clusters.

- v0.21.6: Update comments and README to reflect the current `roll-out-ecs-cluster-update.py` functionality.

- v0.22.0: When updating this repo to work with AWS Provider 3.x in v0.21.0, we missed a `required_providers` constraint in the `ecs-daemon-service` module, so it was still pinned to AWS Provider 2.x. This release fixes that.

- v0.23.0: We have verified that this repo is compatible with Terraform `0.13.x`!
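For reference, here is roughly what a default capacity provider strategy looks like on a plain `aws_ecs_cluster` resource in AWS Provider 3.x (later provider versions moved this configuration to a separate resource). This is a minimal sketch; the cluster name and the `capacity_provider_name` variable are placeholders.

```hcl
variable "capacity_provider_name" {
  description = "Placeholder: name of an existing ECS capacity provider."
  type        = string
}

resource "aws_ecs_cluster" "example" {
  name = "example-cluster"

  # AWS provider 3.x syntax: capacity providers are attached directly to the cluster.
  capacity_providers = [var.capacity_provider_name]

  # Used by services and tasks that don't specify their own strategy.
  default_capacity_provider_strategy {
    capacity_provider = var.capacity_provider_name
    weight            = 1
    base              = 0
  }
}
```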

module-ci

- v0.27.3: `build-packer-artifact` now supports a new `--idempotent` flag. When set to `true` (e.g., `--idempotent true`), the `build-packer-artifact` script will search your AWS account for an AMI that matches the template, and if one exists, will not attempt to build a new AMI. This is useful for preserving the integrity of AMI versions in CI/CD workflows.

- v0.28.0: You can now specify repo restrictions as regular expressions using the `allowed_repos_regex` and `infrastructure_live_repositories_regex` input variables.

- v0.28.1: This release fixes a major regression identified in the previous release (`v0.28.0`), where omitting `allowed_repos_regex` for the `ami_builder` in the `ecs-deploy-runner-standard-configuration` module would inadvertently allow building from any repo.

- v0.28.2: Adds a new flag, `--idempotent`, to the `build-docker-image` tool in the Kaniko image of the ecs-deploy-runner. Invoking the `build-docker-image` tool with this flag causes it to check whether the image already exists before building and pushing.

- v0.28.3: Updated `setup-minikube` to support newer minikube and Kubernetes versions.

- v0.28.4: `infrastructure-deploy-script` now supports running the `refresh` command.

- v0.28.5: This is a maintenance release that exports some test helper functions for the ecs-deploy-runner as a new package under `test/edrhelpers`. This allows the helpers to be used by other projects.

- v0.29.0: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.29.1: You can now configure the ECS deploy runner with repository credentials for pulling down images using the new `repository_credentials_secrets_manager_arn` input variable.

cis-compliance-aws

- v0.6.0: Starting with this release, tests are run against the v3.x series of the AWS provider. Note that this release is backwards compatible with v2.x of the AWS provider. However, there is no guarantee that backwards compatibility with v2.x of the AWS provider will be maintained going forward.

- v0.7.0: This release has a critical vulnerability where the CloudTrail logs lose encryption in cross-account setups. Use `v0.7.1` instead. Updates the `cross-account-iam-roles` module to include a support role, which is necessary for compliance with the CIS AWS Foundations Benchmark. Also removes the `aws_account_id` variable from the `cloudtrail` module and updates that module to use the latest version from `module-security`.

- v0.7.1: Expose the ability to specify an existing KMS key for encrypting CloudTrail logs. This fixes a critical vulnerability in `v0.7.0` where you lose the configured KMS key encryption when shipping logs to an external account.

- v0.8.0: Switch the `aws-securityhub` module from using a Python script to associate new member accounts to using the new `aws_securityhub_member` resource.

- v0.8.1: Bumps the `custom-iam-entity` module to use the latest version from module-security, which includes an improvement to MFA support for IAM roles.

- v0.9.0: We have verified that this repo is compatible with Terraform `0.13.x`! Note that as of this release, the `aws-securityhub` module will no longer automatically clean up associations with master accounts when you run `destroy`.
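Because `aws_securityhub_member` is a native AWS provider resource, member associations are now tracked in Terraform state instead of being managed by a script. Here is a minimal sketch of the resource, applied from the Security Hub master account. The account ID and email variables are placeholders, and this is not the module's actual code.

```hcl
variable "member_account_id" {
  description = "Placeholder: AWS account ID to add as a Security Hub member."
  type        = string
}

variable "member_account_email" {
  description = "Placeholder: root email address of the member account."
  type        = string
}

# Security Hub must be enabled in the master account first.
resource "aws_securityhub_account" "master" {}

resource "aws_securityhub_member" "member" {
  depends_on = [aws_securityhub_account.master]

  account_id = var.member_account_id
  email      = var.member_account_email

  # Send an invitation that the member account then accepts.
  invite = true
}
```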

package-openvpn

- v0.11.1: We now enable server-side encryption by default and add explicit rules to deny any possible public access for the backup S3 bucket.

- v0.12.0: We have verified that this repo is compatible with Terraform `0.13.x`!

terraform-aws-vpc

- v0.9.4: This is a minor update that fixes a perpetual diff in the `vpc-flow-logs` module caused by the new AWS provider v3 chopping the `:*` off the CloudWatch Logs Group ARN.

- v0.10.0: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.10.1: The VPC modules now gracefully handle `num_availability_zones` values that are greater than the number of AZs in the region.

- v0.10.2: Add DynamoDB VPC endpoints to the `vpc-mgmt` module. We already had these endpoints in `vpc-app`, but must have forgotten to add them to `vpc-mgmt`. Propagate the tags from the `custom_tags` input variable in `vpc-app` and `vpc-mgmt` to all VPC endpoints. This ensures more consistent tagging for all resources created by these modules.

module-security

- v0.36.5: Fix a regression bug in `aws-auth` where the command broke for MFA token session retrieval without assuming a role.

- v0.36.6: Fixes the ARN for the `AmazonSSMManagedInstanceCore` managed policy, which was previously incorrect.

- v0.36.7: Fix a bug in the outputs for `aws-config-rules` introduced by `v0.36.0`.

- v0.36.8: Adds the `rds:Download*` permission to the Read Only policy in the `iam-policies` module.

- v0.36.9: This release removes the CloudTrail S3 bucket policy from the `aws_s3_bucket` resources. The policy is already attached via a separate `aws_s3_bucket_policy` resource, so the attachment in the `aws_s3_bucket` was redundant.

- v0.36.10: Adds `eks:Describe*` and `eks:List*` permissions to the Read Only IAM policy.

- v0.37.0: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.37.1: The `configure-fail2ban-cloudwatch.sh` script will now restart `fail2ban` after configuring the CloudWatch metrics actions.

- v0.38.0: This is a cleanup release that removes several unused variables and fixes a few other small issues.

- v0.38.1: Replaces the `AWSConfigRole` managed policy in the `aws-config` and `aws-config-multi-region` modules with the new `AWS_ConfigRole` managed policy, following a deprecation notice from AWS.

- v0.38.2: The `account-baseline-xxx` modules now allow you to configure whether users can change their own passwords and whether password expiration requires an admin reset, using the new input variables `iam_password_policy_allow_users_to_change_password` and `iam_password_policy_hard_expiry`, respectively.

- v0.38.3: Fix a bug where the `fail2ban` CloudWatch configuration script used the incorrect command for restarting `fail2ban` on Amazon Linux 1.

- v0.38.4: You can now specify tags to apply to CloudTrail and IAM Role resources created by the `account-baseline-xxx` modules using the new input variables `cloudtrail_tags` and `iam_role_tags`, respectively.

- v0.39.0: Fix a bug where `account-baseline-root` did not work correctly if none of the accounts in `child_accounts` had `is_logs_account` set to `true`.

- v0.39.1: Updates the recently released `private-s3-bucket` module to make the bucket ACL configurable, and updates `custom-iam-entity` to enforce MFA on the trust policy when creating custom roles.

- v0.39.2: The `private-s3-bucket` module had a bug that made it impossible to configure bucket replication. This release fixes the bug.

- v0.40.0: The `cloudtrail-bucket` module has been refactored to use the `private-s3-bucket` module under the hood to configure the CloudTrail S3 bucket. In the process, the module has been updated so that it now configures the bucket to encrypt objects by default with the newly created KMS key, or with the provided KMS key if one already exists.

- v0.40.1: Updates the recently released `private-s3-bucket` module to make the server-side encryption algorithm configurable.

- v0.40.2: Adds the new `ebs-encryption-multi-region` module mentioned above.

- v0.41.0: The `aws-organizations` and `account-baseline-root` modules now output `organization_root_id`. The `aws-config-multi-region` module can now configure default AWS Config rules (those defined by the `aws-config-rules` module) in every region where AWS Config is enabled. The `aws-config-rules` module can now separately apply rules related to global resources such as IAM using the new `enable_global_resource_rules` variable. Additional parameters for managing `aws-config-rules` are now exposed in the account baseline modules.

- v0.41.1: Fix a bug in the `account-baseline-security` module by changing the default value for `ebs_kms_key_name` from `null` to `""`.

package-zookeeper

- v0.7.1: You can now specify the maximum number of retries during a rolling upgrade in the `zookeeper-cluster` module.

- v0.6.8: You can now enable encryption for the root block device in `zookeeper-cluster` using the new `root_block_device_encrypted` input variable.

- v0.6.9: You can now enable server-side encryption on the S3 bucket used by Exhibitor by setting the `shared_config_s3_bucket_encryption` input variable to `true` and, optionally, providing a custom KMS CMK via the `shared_config_s3_bucket_kms_key_arn` input variable.

package-sam

- v0.2.2: You can now set stage variables using the new `stage_variables` input variable, customize the lambda permission statement ID using the new `xxx_lambda_permission_statement_id` input variable, and set a qualifier on the lambda permission using the new `xxx_lambda_qualifier` input variable.

- v0.2.3: `gruntsam` now supports `OPTIONS` requests.

- v0.3.0: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.3.1: Added the `create_before_destroy = true` lifecycle setting to the `aws_api_gateway_deployment` resource to work around intermittent "BadRequestException: Active stages pointing to this deployment must be moved or deleted" errors.

terraform-aws-monitoring

- v0.22.2: You can now enable server-side encryption for the S3 bucket used to store load balancer access logs using the new `s3_bucket_encryption` input variable.

- v0.23.0: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.23.1: `install-cloudwatch-monitoring-scripts.sh` now sets the cache to be removed on reboot, so any cached information about the instance is reset on every boot.

- v0.23.2: Fixed a bug in the `alb-target-group-alarms` module by switching it to use `"Seconds"` instead of `"Count"` as the proper unit for the `TargetResponseTime` alarm.

- v0.23.3: The `rds-alarms` module now creates the replication error alarm only if there is more than one RDS instance (that is, if there are actual replicas to alert about!).

package-elk

- v0.6.0: This release updates the modules to be compatible with AWS Provider 3.x. It also updates the default Elasticsearch version to `6.8.12`.

- v0.7.0: We have verified that this repo is compatible with Terraform `0.13.x`! We also fixed a bug where `filebeat` tried to start before we had a chance to configure it with auto-discovery settings.

package-messaging

- v0.3.5: The `sns` module now allows you to grant AWS services (e.g., `events.amazonaws.com`) permission to publish to your SNS topic using the new `allow_publish_services` input variable. We also fixed a bug where the `topic_policy` output variable returned only the default policy of the SNS topic; it now returns the full topic policy created by the `sns` module.

package-terraform-utilities

- v0.3.0: We have verified that this repo is compatible with Terraform `0.13.x`! Note that, due to the upgrade, the `run-pex-as-resource` module no longer supports running code on `destroy`.

module-server

- v0.9.0: Terraform 0.13 upgrade: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.9.1: You can now specify the principals that are allowed to assume the IAM role created by the `single-server` module. This can be useful, for example, to override the default of `["ec2.amazonaws.com"]` with `["ec2.amazonaws.com.cn"]` when using the AWS China regions.

- v0.9.2: Fixed a bug in `attach-eni` on CentOS that occurred depending on the CentOS version and AWS instance type.

- v0.9.3: You can now specify a custom private IP address for your EC2 instance using the new `private_ip` input variable in the `single-server` module.
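As a hypothetical illustration of the v0.9.1 change, a wrapper script that generates inputs for `single-server` could choose the EC2 service principal from the target region. This sketch assumes, as the release note implies, that the China regions (whose names start with `cn-`) need `ec2.amazonaws.com.cn` while every other region keeps the default; the function name is made up, and the exact module input that receives the list is whatever the module documentation specifies.

```python
from typing import List


def ec2_assume_role_principals(region: str) -> List[str]:
    """Return the EC2 service principals allowed to assume the instance's IAM role.

    Hypothetical helper: AWS China regions (e.g., cn-north-1, cn-northwest-1)
    live in a separate partition and use the ".com.cn" service principal.
    """
    if region.startswith("cn-"):
        return ["ec2.amazonaws.com.cn"]
    return ["ec2.amazonaws.com"]
```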

package-lambda

- v0.9.0: Terraform 0.13 upgrade: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.9.1: You can now set the new `source_code_hash` input variable to the hash of the zip file you upload to S3, which lets the `lambda` module know when that zip file has changed and, therefore, when the Lambda function needs to be redeployed.

- v0.9.2: We’ve added an option to create an outbound rule in the Lambda security group that permits outbound access from the function. To automatically create a rule allowing access to `0.0.0.0/0`, set `should_create_outbound_rule=true` when calling the `lambda` module.

- v0.9.3: The `lambda` module now allows you to mount an EFS file system in your Lambda functions using the new `mount_to_file_system`, `file_system_access_point_arn`, and `file_system_mount_path` variables.
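Terraform's `aws_lambda_function` resource expects `source_code_hash` to be the base64-encoded SHA-256 of the deployment package, which is what Terraform's `filebase64sha256()` function returns. Assuming the `lambda` module passes its new variable through to that argument (check the module documentation), a minimal sketch of computing the value in a build script, right before uploading the zip to S3, might look like this; the function name is hypothetical.

```python
import base64
import hashlib


def lambda_source_code_hash(zip_path: str) -> str:
    """Return the base64-encoded SHA-256 digest of a Lambda deployment package.

    This should match what Terraform's filebase64sha256() returns for the same file.
    """
    digest = hashlib.sha256()
    with open(zip_path, "rb") as f:
        # Read in chunks so large packages don't have to fit in memory at once
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return base64.b64encode(digest.digest()).decode("ascii")
```

You would then pass the returned string to `source_code_hash` (for example, through Terraform's `-var` flag or a `.tfvars` file) every time you upload a new zip, so a changed package produces a changed hash and triggers a redeploy.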

module-data-storage

- v0.16.0: Terraform 0.13 upgrade: We have verified that this repo is compatible with Terraform `0.13.x`!

- v0.16.1: Added a `lifecycle` block that ignores changes to `snapshot_identifier` so that restored DB clusters don't get destroyed during updates.

- v0.16.2: You can now enable the HTTP endpoint for the Data API on Aurora Serverless using the new `enable_http_endpoint` input variable.

- v0.16.3: You can now configure IAM roles for the `redshift` module to use via the new `iam_roles` input variable.

module-cache

- v0.10.1: You can now restore a Redis cluster from a snapshot using the new `snapshot_name` or `snapshot_arn` input variables.

package-beanstalk

- v0.1.1: You can now specify the load balancer type to use in the `elasticbeanstalk-environment` module by using the new `load_balancer_type` input variable.

module-load-balancer

- v0.23.2: Fix a bug in the `alb-target-group-alarms` module, switching the module to use `"Seconds"` instead of `"Count"` as the proper unit for the `TargetResponseTime` alarm.

DevOps News

Kubernetes updates: version 1.18 and the Load Balancer Controller

What happened: A couple of updates for those of you using Kubernetes: first, EKS now supports Kubernetes 1.18. Second, AWS has renamed the ALB Ingress Controller to the Load Balancer Controller, which now supports NLBs as well.

Why it matters: Here are the highlights:

- Kubernetes 1.18: This release includes the Topology Manager reaching beta status, a new beta of Server-side Apply, and a new IngressClass resource for the Ingress specification, which makes it simpler to customize Ingress configuration. Additionally, you can now configure the behavior of horizontal pod autoscaling.

- Load Balancer Controller: The new controller includes a number of improvements: it can manage ALBs or NLBs, it lets you share a single ALB across multiple applications in your Kubernetes cluster, and it supports using NLBs with Fargate.

What to do about it: Check out the Kubernetes 1.18 and Load Balancer Controller announcement blog posts for more details. Note that we added support for Kubernetes 1.18 in our terraform-aws-eks repo in v0.27.0.

New HashiCorp tools: Boundary and Waypoint

What happened: HashiCorp has released two new open source projects: Boundary and Waypoint.

Why it matters: Here’s the short version of what these new tools offer:

- Boundary: A tool for managing access to your servers based on existing identity providers. This is potentially an alternative to VPN and SSH software.

- Waypoint: A tool for giving developers a consistent experience for building, deploying, and releasing code via a single CLI command, `waypoint up`, that under the hood can work with a variety of tools, platforms, and workflows (e.g., Kubernetes, ECS, Docker Hub, ECR, CircleCI, GitHub Actions, etc.).

What to do about it: Check out the Boundary and Waypoint announcement blog posts for all the details, give these new tools a shot, and let us know what you think of them!

Docker Hub Rate Limiting

What happened: Docker has announced that they will now start rate limiting pulls from Docker Hub.

Why it matters: If you use `docker pull` or `docker run` (which uses `docker pull` under the hood) to pull an image from Docker Hub, Docker will now enforce a fairly low rate limit. According to the pricing page, anonymous users are limited to just 100 pulls per 6-hour period, and authenticated users on the free plan to 200 pulls per 6-hour period.

What to do about it: This is most likely to affect CI servers, so look into your pipelines to see how often you’re pulling images, whether those images are being cached locally, and whether you need to authenticate your machine user to Docker Hub or even upgrade to a paid plan. Alternatively, Amazon has announced that it is working on a free, public container registry, so you may want to switch to that when it’s available.
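To get a rough sense of whether a CI runner would hit these limits, you could replay the timestamps of its Docker Hub pulls (for example, parsed from build logs) against a rolling 6-hour window. The sketch below is a simplified model: it assumes the limits quoted above (100 pulls for anonymous users, 200 for authenticated free users) and treats the 6-hour period as a sliding window, which may not match exactly how Docker enforces it. The function and plan names are hypothetical.

```python
from collections import deque
from datetime import datetime, timedelta
from typing import Iterable, Optional

WINDOW = timedelta(hours=6)
# Limits quoted in this newsletter; check Docker's pricing page for current values.
LIMITS = {"anonymous": 100, "free": 200}


def first_rate_limited_pull(pulls: Iterable[datetime], plan: str = "anonymous") -> Optional[datetime]:
    """Return the timestamp of the first pull that would exceed the limit, or None.

    Models the limit as a sliding 6-hour window over the given pull timestamps.
    """
    limit = LIMITS[plan]
    window: deque = deque()
    for ts in sorted(pulls):
        # Drop pulls that have aged out of the 6-hour window
        while window and ts - window[0] >= WINDOW:
            window.popleft()
        if len(window) >= limit:
            return ts
        window.append(ts)
    return None
```

If this returns a timestamp for a typical day of builds, that runner is a good candidate for local image caching, authenticated pulls, or a paid plan.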

Security Updates

Below is a list of critical security updates that may impact your services. We notify Gruntwork customers of these vulnerabilities as soon as we know of them via the Gruntwork Security Alerts mailing list. It is up to you to scan this list and decide which of these apply and what to do about them, but most of these are severe vulnerabilities, and we recommend patching them ASAP.

Apple

- Apple released a set of security patches for iOS, iPadOS, and macOS that protect against critical vulnerabilities that are known to be exploited in the wild. These vulnerabilities allow an attacker to execute code remotely on your device if you install a malicious font file or application. We strongly recommend updating your Apple devices to the latest versions of the OS. Refer to the iOS/iPadOS 14.2 security update notes and the macOS 10.15.7 security update notes for more details.

GitHub Actions

- CVE-2020-15228: GitHub issued an advisory for an injection vulnerability in GitHub Actions. The vulnerability is in how GitHub Actions parses the STDOUT of actions to look for and run workflow commands. This can be exploited to inject workflow commands such as `set-env` to modify the environment variables of the running action. For example, if you have a workflow action that outputs the contents of a GitHub issue title to STDOUT, an attacker can create an issue with a specially crafted title to inject workflow commands into the running action without modifying any code. We strongly recommend updating your GitHub Actions to avoid logging untrusted information. Refer to this blog post from GitHub on the options for remediating this vulnerability, including how to update your actions so they can still log that information.
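To make the injection mechanism concrete, here is a deliberately simplified model of a runner that scans an action's STDOUT for workflow commands of the form `::set-env name=NAME::VALUE`. It is not GitHub's actual runner code: real runners recognize more commands and syntax, and the function names here are hypothetical. It shows why echoing an attacker-controlled issue title is enough to change the environment, plus one naive mitigation (ensuring no untrusted line can start with `::`); GitHub's blog post describes the supported remediation options.

```python
import re
from typing import Dict, Iterable

# Simplified pattern for the set-env workflow command at the start of a line
SET_ENV = re.compile(r"^::set-env name=([^:]+)::(.*)$")


def apply_workflow_commands(stdout_lines: Iterable[str], env: Dict[str, str]) -> Dict[str, str]:
    """Model of a runner: any STDOUT line that matches set-env updates the environment."""
    env = dict(env)
    for line in stdout_lines:
        match = SET_ENV.match(line)
        if match:
            env[match.group(1)] = match.group(2)
    return env


def neutralize(untrusted: str) -> str:
    """Naive mitigation: prefix every line so none can begin with '::'."""
    return "\n".join("> " + part for part in untrusted.splitlines())
```

For example, an issue titled `::set-env name=INJECTED::attacker-value` that a workflow echoes verbatim would set `INJECTED` in this model, while the neutralized output would not.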

HashiCorp Vault

- CVE-2020-16250: A critical vulnerability has been found in HashiCorp Vault, an open source tool used for managing secrets, such as passwords, API keys, and TLS certs. The vulnerability is in the IAM authentication methods for AWS and GCP and may allow an attacker to spoof arbitrary IAM identities and access arbitrary secrets. Fixes are available in Vault versions 1.2.5, 1.3.8, 1.4.4, and 1.5.1. We strongly recommend updating immediately. For more details, see Enter the Vault: Authentication Issues in HashiCorp Vault, CVE-2020-16250, and the Vault CHANGELOG. We notified the Gruntwork Security Alerts mailing list about this vulnerability on October 8, 2020.
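If you run several Vault clusters, one way to triage them is to compare each cluster's version against the fixed releases listed above. The sketch below assumes that each listed version is the first patched release on its 1.x minor line, treats any older line (1.1.x, 0.x, and so on) as needing an upgrade, and reports minor lines newer than 1.5 as "not covered" rather than guessing; confirm the results against the Vault CHANGELOG. The function names are hypothetical.

```python
from typing import Optional, Tuple

# First patched release on each minor line, per the advisory above
FIXED = {(1, 2): (1, 2, 5), (1, 3): (1, 3, 8), (1, 4): (1, 4, 4), (1, 5): (1, 5, 1)}


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse strings like 'v1.4.3' or '1.4.3+ent' into (1, 4, 3).

    Pre-release suffixes such as '-rc1' are dropped, which is good enough for triage.
    """
    core = version.lstrip("v").split("+")[0].split("-")[0]
    major, minor, patch = (int(part) for part in core.split("."))
    return major, minor, patch


def is_patched(version: str) -> Optional[bool]:
    """True if on a fixed release, False if an upgrade is needed, None if not covered."""
    v = parse_version(version)
    fix = FIXED.get(v[:2])
    if fix is not None:
        return v >= fix
    if v[:2] < min(FIXED):
        # Older lines have no patched release listed: upgrade to a fixed line
        return False
    # Newer minor lines aren't covered by the list; check the CHANGELOG
    return None
```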
