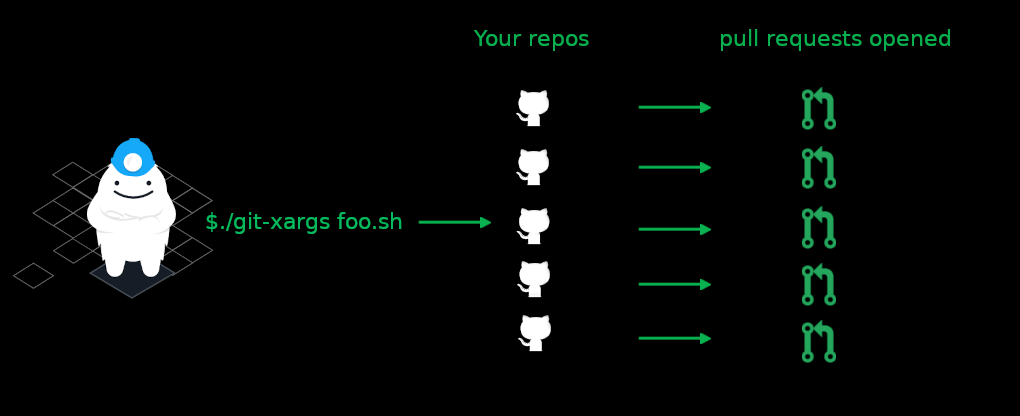

Today we’re open sourcing git-xargs, a command-line tool (CLI) for making updates across multiple GitHub repositories with a single command. You give git-xargs a script to run and a list of repos, and it checks out each repo, runs your scripts against it, commits any changes, and opens pull requests with the results. At the end of each run, you get a detailed report on exactly what happened with each repo.

For example, have you ever needed to add a particular file across many repos at once? Or to run a search and replace to change your company or product name across 150 repos with one command? What about upgrading Terraform modules to all use the latest syntax? How about adding a CI/CD configuration file, if it doesn’t already exist, or modifying it in place if it does, but only on a subset of repositories you select?

You can handle these use cases and many more with a single git-xargs command. Just to give you a taste, here’s how you can use git-xargs to add a new file to every repository in your GitHub organization:

    git-xargs \
      --branch-name add-contributions \
      --github-org my-example-org \
      --commit-message "Add CONTRIBUTIONS.txt" \
      touch CONTRIBUTIONS.txt

In this example, every repo in the my-example-org GitHub org will have a CONTRIBUTIONS.txt file added, and an easy-to-read report will be printed to STDOUT.

In this blog post, I’m going to cover the following topics:

- Example use cases for this tool

- Why we built git-xargs

- How git-xargs works under the hood

- How you can get started with git-xargs quickly

Let’s look at some example use cases for git-xargs

In the following sections we’ll take a look at some of the use cases we found git-xargs useful for tackling internally, as well as some suggested tasks it would be well-suited for in the wild.

Use case: updating the copyright year for all your license files

- This script will create a LICENSE.txt file, if it doesn’t exist already, and put the MIT license in it.

- If a LICENSE.txt file already exists, it will update the copyright year.
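As a rough sketch, a script with the behavior described above might look like the following. The function name `add_or_edit_license`, the copyright holder `Example, Inc.`, and the exact format of the copyright line are assumptions made for illustration; this is not the original script.

```shell
#!/usr/bin/env bash
set -euo pipefail

# add_or_edit_license FILE YEAR HOLDER
# Creates FILE with the MIT license if it is missing; otherwise rewrites the
# four-digit year on an existing "Copyright (c) YYYY ..." line.
add_or_edit_license() {
  local file="$1" year="$2" holder="$3"

  if [ -f "$file" ]; then
    # -i.bak works with both GNU and BSD sed; the backup is removed afterwards.
    sed -E -i.bak "s/^(Copyright \(c\) )[0-9]{4}/\1${year}/" "$file"
    rm -f "${file}.bak"
  else
    cat > "$file" <<EOF
MIT License

Copyright (c) ${year} ${holder}

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.
EOF
  fi
}

# git-xargs runs the script from the root of each cloned repo.
# "Example, Inc." is a placeholder copyright holder.
add_or_edit_license "LICENSE.txt" "$(date +%Y)" "Example, Inc."
```

Because the script is safe to run twice (the second run only touches the year), it can be re-run against the same repos every January.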


Use case: Updating CircleCI contexts (or other YAML files) across repos

We use CircleCI extensively, and we wanted to begin leveraging their new contexts feature so that we could increase the security and control of our build projects.

Using contexts is more maintainable and secure than copying and pasting the same secrets as environment variables throughout every repository that needs access to the same credentials, such as AWS keys in order to run Terratest integration tests on every build.

By having all of our projects use a single CircleCI context, we could easily rotate our secrets in one place and have all of our projects pick up the change instantly. However, to get this working we also needed to update our CircleCI workflows syntax version to 2.0 across all our repos, as this is the first version that supports contexts. Imagine each repo’s .circleci/config.yml still declaring an older workflows version.

To accomplish this, we leveraged Mike Farah’s excellent yq tool to programmatically update all our .circleci/config.yml files. This script is a simplified version that shows how you might bump your CircleCI workflows to the version that supports contexts, but you can imagine how you could easily extend this to add or rotate CircleCI contexts, etc.
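A simplified script along these lines might look like the sketch below. It assumes Mike Farah’s yq version 4 (the expression syntax differs in older releases) and that the version lives under `workflows.version`; the function name `upgrade_workflows_version` is hypothetical.

```shell
#!/usr/bin/env bash
set -euo pipefail

# upgrade_workflows_version FILE
# Sets workflows.version to 2 in a CircleCI config, editing the file in place.
# Repos without a CircleCI config are skipped.
upgrade_workflows_version() {
  local file="$1"
  [ -f "$file" ] || return 0
  # Assumes yq v4. Note that this assignment also creates the key when the
  # file has no workflows section yet.
  yq eval --inplace '.workflows.version = 2' "$file"
}

# git-xargs runs the script from the root of each cloned repo.
upgrade_workflows_version ".circleci/config.yml"
```

Editing the parsed YAML with yq rather than running a text substitution means the change does not depend on the file’s indentation or key order.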


Use case: Rename all your repositories to follow a new pattern

We recently renamed all of our repos to be compliant with HashiCorp’s Terraform Cloud, which required that every repo be prefixed with terraform-aws-<module-name>. Without git-xargs this would have been a pretty painful exercise given the scope of our Terraform library.

After we renamed all our repositories through the GitHub UI, we still needed to update all our internal references throughout our source code to point to the updated repo names (in READMEs, code and URLs). We were able to write one Bash script that did this for us:
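A minimal sketch of such a script follows. The function name `update_repo_references` and the example names `module-vpc` and `terraform-aws-vpc` are hypothetical, and the sketch assumes repo names contain no `|` characters or regex metacharacters other than `-`.

```shell
#!/usr/bin/env bash
set -euo pipefail

# update_repo_references OLD_NAME NEW_NAME [DIR]
# Replaces every occurrence of OLD_NAME with NEW_NAME in files under DIR
# (default: current directory), leaving Git's own metadata untouched.
update_repo_references() {
  local old="$1" new="$2" dir="${3:-.}"

  # grep exits non-zero when nothing matches; "|| true" keeps that from
  # aborting the script in repos that never mention the old name.
  { grep -rlF --exclude-dir=.git -- "$old" "$dir" || true; } |
    while IFS= read -r file; do
      # -i.bak works with both GNU and BSD sed; the backup is removed afterwards.
      sed -i.bak "s|${old}|${new}|g" "$file"
      rm -f "${file}.bak"
    done
}

# Only run when called with an old and a new name, for example:
#   update-all-repo-names.sh module-vpc terraform-aws-vpc
if [ "$#" -eq 2 ]; then
  update_repo_references "$1" "$2"
fi
```

If the old name is a substring of the new one, running the script twice would apply the prefix twice, so in that case the pattern needs to be anchored more tightly.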


Why did we build git-xargs?

At Gruntwork we maintain over 150 repositories, containing hundreds of thousands of lines of code. Thousands of developers rely on this code in production.

This means a large part of what we do is keeping our code, especially our Infrastructure as Code (IaC) library, up to date with best practices, new releases (e.g., new Terraform versions), and security patches. In addition to constantly shipping improvements and new features for our IaC library and service catalog, we also have to stay on top of maintenance tasks that are constantly cropping up.

And we do all of this with a team of fewer than 20 engineers! git-xargs helps us perform tasks that require updating many of our repositories at once more quickly and efficiently.

How git-xargs works

git-xargs allows you to run a script (or multiple scripts, e.g., Bash, Ruby, Python) against 5, 50, or 150 repos at once! You can select the exact repos you want to run it against either by supplying the --github-org flag to match every repo in your GitHub org, or by providing a flat file that explicitly lists which repos you want it to operate on.
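For the flat-file option, a minimal sketch might look like this. It assumes the file lists one `org/repo` entry per line, that it is passed with the `--repos` flag, and that a token is exported as `GITHUB_OAUTH_TOKEN`; check `git-xargs --help` for the exact options in your version. The repo names are placeholders.

```shell
#!/usr/bin/env bash
set -euo pipefail

# A flat file with one "org/repo" entry per line (placeholder names).
repos_file="repos.txt"
cat > "$repos_file" <<'EOF'
my-example-org/terraform-aws-vpc
my-example-org/terraform-aws-ecs
my-example-org/terraform-aws-lambda
EOF

# Only call git-xargs when the binary and a GitHub token are available.
if command -v git-xargs >/dev/null 2>&1 && [ -n "${GITHUB_OAUTH_TOKEN:-}" ]; then
  git-xargs \
    --branch-name add-contributions \
    --repos "$repos_file" \
    --commit-message "Add CONTRIBUTIONS.txt" \
    touch CONTRIBUTIONS.txt
else
  echo "git-xargs or GITHUB_OAUTH_TOKEN not available; only wrote $repos_file" >&2
fi
```

Keeping the list in a file makes it easy to roll a change out in batches: start with a handful of repos, review the pull requests, then widen the list.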

- git-xargs will clone each of your selected repos to the /tmp/ directory of your local machine

- it will check out a local branch (whose name you specify)

- it will run all your selected scripts against your selected repos

- it will commit any changes in each of the repos (with a commit message you can optionally specify)

- it will push your local branch with your new commits to your repo’s remote

- it will call the GitHub API to open a pull request with a title and description that you can optionally specify. If you don’t specify these, git-xargs will use your commit message for the PR title and description, if you provide one, or fall back to defaults for all three, if you don’t.

- it will print out a detailed run summary to STDOUT that explains exactly what happened with each repo and provides links to successfully opened pull requests that you can quickly follow from your terminal. If any repos encountered errors at runtime (whether they weren’t able to be cloned, or script errors were encountered during processing, etc.) all of this will be spelled out in detail in the final report so you know exactly what succeeded and what went wrong.
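To make the per-repo steps above concrete, here is a rough shell approximation using plain git. This is not how git-xargs is implemented (it is a Go program that talks to the GitHub API); the function name `process_repo` is hypothetical, and the pull request and report steps are left as a comment.

```shell
#!/usr/bin/env bash
set -euo pipefail

# process_repo CLONE_URL BRANCH COMMIT_MESSAGE COMMAND [ARGS...]
# Clones one repo into a temporary directory, runs COMMAND in its root on a
# new branch, and pushes the branch if the command changed anything.
process_repo() {
  local clone_url="$1" branch="$2" message="$3"
  shift 3
  local workdir
  workdir="$(mktemp -d)"

  git clone --quiet "$clone_url" "$workdir/repo"
  (
    cd "$workdir/repo"
    git checkout --quiet -b "$branch"

    "$@"   # run the user's command or script in the repo root

    git add --all
    if git diff --cached --quiet; then
      echo "no changes: $clone_url"
    else
      git commit --quiet -m "$message"
      git push --quiet origin "$branch"
      echo "pushed $branch: $clone_url"
      # A pull request would be opened here through the GitHub API.
    fi
  )
  rm -rf "$workdir"
}

# Example (placeholder URL):
#   process_repo git@github.com:my-example-org/some-repo.git \
#     add-contributions "Add CONTRIBUTIONS.txt" touch CONTRIBUTIONS.txt
```

Calling this function in a loop would process repos one at a time.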

git-xargs does all this using goroutines, so it is pretty fast, as it runs against multiple repos concurrently.

Additional ideas to implement as scripts for git-xargs

Here are some other starter ideas for scripts that would be good candidates to run via git-xargs :

- Modify package.json files in-place across repos to bump a common node.js dependency using jq (https://stedolan.github.io/jq/)

- Update your Terraform module library from Terraform 0.13 to 0.14.

- Remove stray files of any kind, when found, across repos using find and its exec option

- Add a new file of any kind with conditional contents to repos using heredoc syntax: https://stackoverflow.com/questions/2953081/how-can-i-write-a-heredoc-to-a-file-in-bash-script

- Rename every instance of a company or product name that has changed using sed

- Add baseline tests to repos that are missing them by copying over a common local folder where they are defined

- Refactor multiple Golang tools to use new libraries by executing go get to install and uninstall packages, and modify the source code files’ import references
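As one example from the list above, the stray-file idea can be sketched with find and its exec option. The file patterns (`.DS_Store` and `*.orig`) and the function name `remove_stray_files` are assumptions chosen for illustration.

```shell
#!/usr/bin/env bash
set -euo pipefail

# remove_stray_files [DIR]
# Deletes stray files under DIR (default: current directory) while pruning
# the .git directory so repository metadata is never touched.
remove_stray_files() {
  local dir="${1:-.}"
  find "$dir" \
    -type d -name .git -prune -o \
    -type f \( -name '.DS_Store' -o -name '*.orig' \) \
    -exec rm -f {} +
}

# git-xargs runs the script from the root of each cloned repo.
remove_stray_files "${1:-.}"
```

Repos that contain none of these files end up with no changes to commit.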

Give it a shot and let us know what you think, and contribute some scripts!

Please give git-xargs a shot and let us know what you think! Grab a copy of the binary from the git-xargs releases page, give it execute permissions, and run it with --help to get started.

If you have a good script that you believe is generic enough to be of use to many other people, please open a pull request against our scripts directory so that others may benefit from it!

If you find bugs or have ideas for ways we could extend and improve it, please feel free to file a GitHub issue.
