Adventures with Terraform, AWS Lambda, and KMS

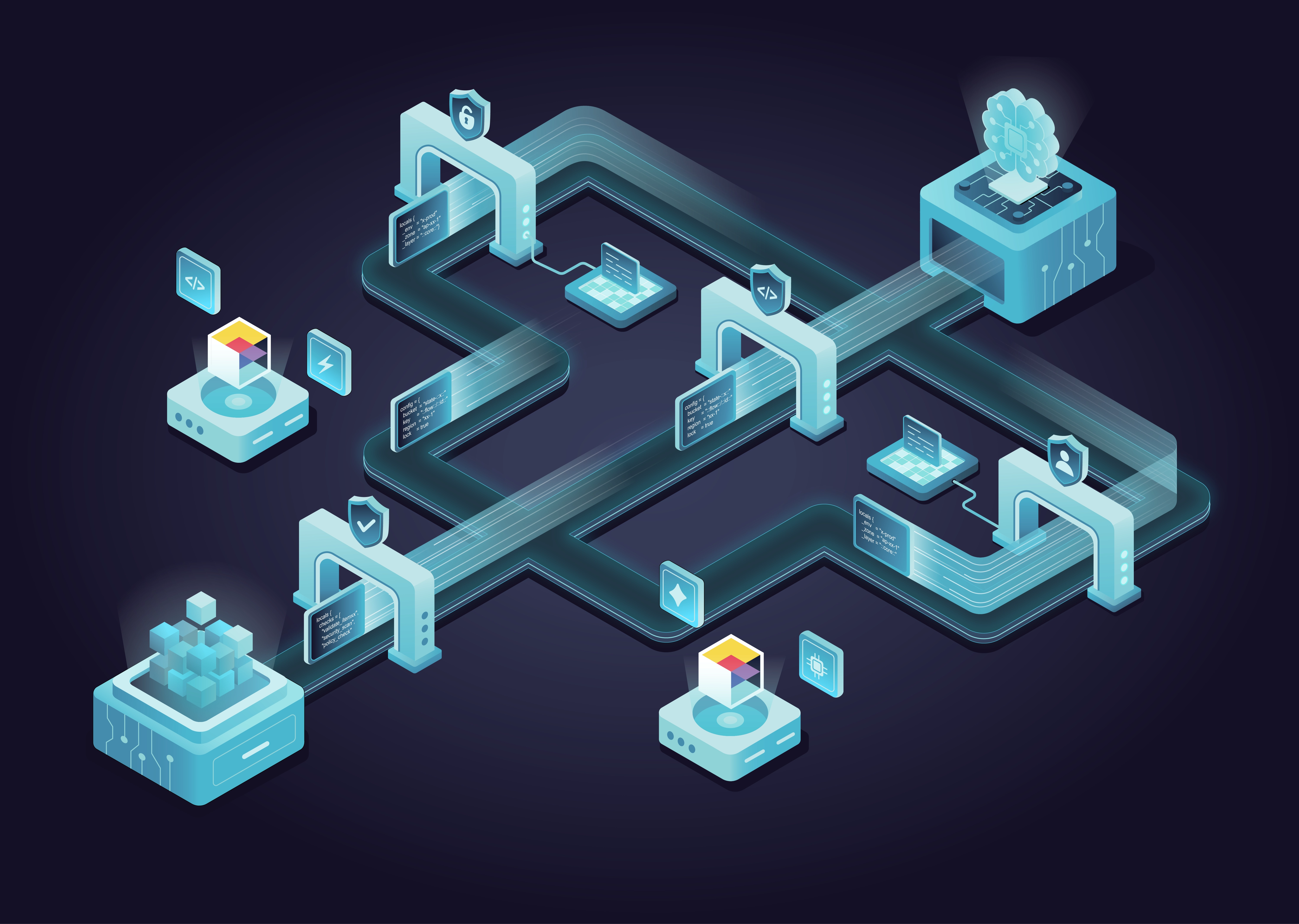

This is the second entry in The Yak Shaving Series, where we share stories of some of the unexpected, bizarre, painful, and time-consuming problems we’ve had to solve while working on DevOps and infrastructure. In the first entry, we shared a horror story featuring Auto Scaling Groups, EBS Volumes, Terraform, and Bash. In this entry, we’ll talk about the unexpected errors you run into while using Terraform, AWS Lambda, and Amazon’s Key Management Service (KMS).

At Gruntwork, we make heavy use of Terraform to define and manage our infrastructure as code. For the most part, we love Terraform—we even wrote a whole blog post series and book about it! But sometimes, it becomes painfully obvious that Terraform is a pre-1.0 tool, and not particularly mature. This is the story of one of these times.

Error #1: Access to KMS is not allowed

It all started as a normal, happy day. I was using one of the apps we have running in a test AWS account and noticed a seemingly innocuous error in the logs:

This app stores secrets (such as API Keys and database passwords) in AWS Secrets Manager, and this short and unhelpful error message seemed to indicate that the app was unable to read one of its secrets. We had recently changed the way we managed secrets and permissions in this app, and as the test environment was running a slightly older version of the app, I figured all I needed to do was deploy a new version.

This app is built on top of AWS Lambda, so there are no servers to deploy or manage. We have a reusable Terraform module for deploying to AWS Lambda in just a few lines of code:

module "app" {
  source = "git::git@github.com:gruntwork-io/package-lambda.git//modules/lambda?ref=v0.3.0"

  name        = "my-app"
  description = "An app deployed with AWS Lambda"

  source_path = "${local.source_path}"
  runtime     = "nodejs"
  handler     = "index.handler"

  timeout     = 30
  memory_size = 2048

  environment_variables = {
    secret_name = "${local.secret_name}"
    foo         = "${local.foo}"
    bar         = "${local.bar}"
  }
}

Normally, deploying a new version is as easy as running:

terraform apply

But this was not going to be an easy day.

Error #2: ResourceConflictException

When I ran terraform apply, I got my second error of the day:

I Googled this error and found an issue in the serverless framework with the exact same error message. We were using Terraform to deploy our Lambda functions and not the serverless framework, but the error looked identical, and the suggested workaround was to delete the function and create it from scratch. This didn’t seem like a good way to solve the problem long-term, but as this was our test environment, I figured this would be the quickest way to move forward, and ran:

terraform destroy

This turned out to be a huge mistake.

Error #3: RequestError

As luck would have it, I was at a hotel with a crappy WiFi connection when I ran destroy, and in the middle of the destroy run, the connection died. As a result, Terraform failed to (1) destroy everything, (2) release the lock, and (3) save state:

Failed to save state: Failed to upload state: RequestError: send request failed
caused by: Put https://<redacted>.amazonaws.com/<redacted>: transport connection broken: <redacted> write: connection reset by peer

Perhaps with the recently-released Terraform remote backend, this sort of issue could’ve been avoided (though this does require you to use Terraform Enterprise), or, simpler still, it would be great if Terraform incorporated a simple retry mechanism. I had neither of these options, so instead, I had to do a bunch of state surgery using terraform state push, terraform force-unlock, terraform state rm, and so on. Eventually, I got it working, and terraform destroy completed successfully.

Now that the app was undeployed, it was time to redeploy it:

terraform apply

What could possibly go wrong?

Error #4: ‘count’ cannot be computed

Apparently, a lot. Even though the app had previously been deployed without issues, trying to deploy it again led to an error:

value of 'count' cannot be computed

This is an infamous Terraform limitation (just look at all the bugs filed about it) where the count parameter—which, a bit like a for-loop, can be used to create multiple copies of a resource—is not allowed to reference any computed value, such as the output of a resource. Worse yet, the error message provides very little context, and as I didn’t have any count parameters directly referencing resources, I had to do a lot of digging (mostly by commenting out large chunks of my Terraform code, binary chop style) to find the culprit.

That culprit ended up being the source_path parameter:

module "app" {
  source = "git::git@github.com:gruntwork-io/package-lambda.git//modules/lambda?ref=v0.3.0"

  name        = "my-app"
  description = "An app deployed with AWS Lambda"

  # This was the problem!
  source_path = "${local.source_path}"
  runtime     = "nodejs"
  handler     = "index.handler"

  timeout     = 30
  memory_size = 2048

  environment_variables = {
    secret_name = "${local.secret_name}"
    foo         = "${local.foo}"
    bar         = "${local.bar}"
  }
}

In the code for this app, we were setting local.source_path to a value that, through a few layers of indirection, eventually referenced the output of a resource; in the code for the lambda module, source_path was being used in a count parameter.

It took quite a bit of refactoring to remove the resource dependency from local.source_path, but I eventually got it done, ran terraform apply once more, and prayed…

Error #5: data.external does not have attribute

The answer to my prayers was yet another error:

Resource 'data.external.add_config' does not have attribute

'result.path' for variable 'data.external.add_config.result.path'

Part of the code for this app was using an external data source to execute a small script to dynamically generate a config file:

data "external" "add_config" {
  program = [
    "python",
    "${path.module}/add-config.py",
    "${local.config_file_name}",
    "${local.config_params}"
  ]
}

Even though that script properly implemented the external data source protocol, for some reason, Terraform seemed to be trying to read an output from that data source too early. The reason turned out to be another Terraform bug.
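For readers who haven't used it, the external data source protocol is small: Terraform writes the `query` map to the program's stdin as a JSON object, and the program must print a JSON object whose values are all strings to stdout. Below is a minimal sketch of that contract in Python. It is not the real add-config.py: that script takes its inputs as command-line arguments, and the `file_name` and `params` query keys here are assumptions made up for illustration. The only detail taken from the post is that the result exposes a `path` key.

```python
import io
import json
import os
import tempfile


def handle_query(stdin, stdout):
    """Read a JSON query from stdin and write a JSON result to stdout."""
    # Terraform sends the data source's `query` map as one JSON object.
    query = json.load(stdin)

    # Hypothetical keys; the real script receives these as CLI arguments.
    path = query["file_name"]
    with open(path, "w") as f:
        f.write(query["params"])

    # The result must be a JSON object whose values are all strings.
    json.dump({"path": path}, stdout)


# Simulate a single invocation without Terraform by faking stdin/stdout.
target = os.path.join(tempfile.mkdtemp(), "config.json")
fake_stdin = io.StringIO(json.dumps({"file_name": target, "params": '{"a": 1}'}))
fake_stdout = io.StringIO()
handle_query(fake_stdin, fake_stdout)
result = json.loads(fake_stdout.getvalue())
```

A real program would call `handle_query(sys.stdin, sys.stdout)` and, on failure, print a message to stderr and exit with a non-zero status.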

I tried to work around this issue by adding a depends_on clause to force the external data source to wait, and ran terraform apply yet again…

Error #6: depends_on doesn’t work with data sources

Unfortunately, this didn’t work either, due to, drumroll please… another Terraform bug! I was starting to get a little frustrated. It turns out that data sources don’t work properly with depends_on.

So, it was time for some more refactoring, this time to replace the external data source with a null_resource with a local-exec provisioner:

resource "null_resource" "add_config" {
  provisioner "local-exec" {
    command = "python ${path.module}/add-config.py ${local.config_file_name} ${local.config_params}"
  }
}

Surely terraform apply would work this time?

Error #7: whitespace

Nope. The code was now failing with a new crazy error message (which I unfortunately didn’t copy down) when trying to execute my script. I knew the script worked fine (I could run it directly without issues), so it had to be something with how I was calling it from Terraform.

After a bunch more digging, I found that the local-exec provisioner was not handling whitespace correctly in the arguments I was passing to my script. As a result, I had to base64encode the arguments in my Terraform code and update the script to base 64 decode them before using them:

resource "null_resource" "add_config" {
  provisioner "local-exec" {
    command = "python ${path.module}/add-config.py ${local.config_file_name} ${base64encode(local.config_params)}"
  }
}
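The script side of this change might look like the sketch below. The exact failure I hit isn't recorded, so the `shlex` part only illustrates one way whitespace and quotes can mangle an argument when a command line is split shell-style. The `params` value and the `decode_arg` helper are hypothetical names, not code from the real script.

```python
import base64
import shlex


def decode_arg(encoded):
    """Reverse Terraform's base64encode(): base64 text -> original UTF-8 string."""
    return base64.b64decode(encoded).decode("utf-8")


# Hypothetical parameter string that contains whitespace and quotes.
params = '{"greeting": "hello world"}'

# Passed raw, a shell-style split breaks it apart and strips the quotes.
raw_args = shlex.split("add-config.py config.json " + params)

# The base64 form has no whitespace or quotes, so it stays a single argument.
encoded = base64.b64encode(params.encode("utf-8")).decode("ascii")
safe_args = shlex.split("add-config.py config.json " + encoded)

# The script recovers the original string from its last argument.
recovered = decode_arg(safe_args[-1])
```

Here `raw_args` ends up with four elements instead of three, while `safe_args` keeps the parameters intact as its third element.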

That meant it was time for yet another run of terraform apply. Please oh please oh please oh please just work.

Error #8: timeout

My hotel WiFi decided to strike again. But this time, the problem wasn’t a disconnect, but a lack of bandwidth:

It turns out that Terraform has (gasp) another open bug where there is a hard-coded timeout of 1 minute for uploading the deployment package for a Lambda function. With my fast Internet connection at home, I had never hit this limitation before, but with the slow and flaky WiFi at my hotel, I could never get the ~30MB deployment package for this app uploaded in time.
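To put numbers on that, here is a back-of-the-envelope calculation of the upload speed needed to beat the timeout. It uses the rough 30MB and 1 minute figures from above and ignores protocol overhead, so treat the result as a lower bound rather than a precise requirement.

```python
def min_upload_mbps(size_mb, timeout_s):
    """Minimum sustained upload speed, in megabits per second."""
    # Megabytes -> megabits (x8), then divide by the time available.
    return size_mb * 8 / timeout_s


# ~30MB package, 60 second timeout.
required = min_upload_mbps(30, 60)
```

That works out to a sustained 4 megabits per second of upload bandwidth, which is more than a congested hotel network will reliably give you.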

The workaround for this issue required a little creativity:

- I fired up an EC2 Instance in our AWS account.

- I gave it an IAM Role with the permissions it would need to deploy the app.

- I SSHed to the instance and used the -A flag to enable SSH agent forwarding.

- Now, I could git clone the private repo for the app, and the SSH Agent on my local computer would be used for auth, without having to copy my SSH keys to the EC2 Instance.

- With the code checked out on the EC2 Instance, I ran terraform apply, and, with the much faster Internet connection in the AWS account, I was able to get the deployment package uploaded before hitting the timeout!

I was ready to celebrate until…

Error #9: ResourceConflictException… again

AAAHHHHHHHH. Error #2 was back!

I had gone through this whole destroy and apply cycle for nothing! Searching around some more, I found this is—wait for it—a known bug in Terraform. Judging by the name, I figured the cause must be related to making too many concurrent modifications to the same Lambda resources, so I added depends_on clauses to the Lambda resources to ensure that they were created sequentially rather than in parallel (see this comment for details).

I ran terraform apply once more and it finally ran to completion! I thought I was done…

Error #10: Access to KMS is not allowed… again

But it turns out the beating had only begun. When I checked the app’s logs once more, the same mysterious KMS error was still showing up, even though the new version of the app was finally deployed:

I spent hours debugging this until, using the Secrets Manager UI, I finally figured out the real reason: the secret we were trying to read was encrypted with a KMS master key that we had deleted! Unfortunately, Secrets Manager doesn’t give you any warning about this, or even a clear error message, even though the secret is now completely unreadable!

Moreover, you can’t delete secrets immediately in Secrets Manager either—you can only mark them for deletion so they get cleaned up after several days—so I had to create a secret with a different name and tell our app to use this new name instead. Fortunately, the name of the secret is exposed through the app’s Terraform configuration, so all I needed to do was tweak one variable and re-run terraform apply. That shouldn’t take more than a minute or two…

Error #11: ResourceConflictException… yet again

OMGWTFBBQ. The ResourceConflictException was back yet again to torment me a 3rd time!

Despite my workaround with depends_on, this error was back, and back for good. Nothing I did seemed to fix it.

I had to resort to one final workaround: set -parallelism=1 when running terraform apply. This meant that all the resources would be created sequentially, with absolutely no concurrency whatsoever. It fixed the bug, though it made app deployment significantly slower… But at least it deployed, right?

Error #12: environment variables

Wrong. Somehow, this new deployment revealed yet another bug. In the Terraform configuration, we pass the name of the secret, as well as many other configs, to our Lambda function using environment variables. When we changed the secret’s name, we picked a slightly longer one that just happened to push us over the 4KB limit on environment variables for Lambda.

module "app" {
  source = "git::git@github.com:gruntwork-io/package-lambda.git//modules/lambda?ref=v0.3.0"

  name        = "my-app"
  description = "An app deployed with AWS Lambda"

  source_path = "${local.source_path}"
  runtime     = "nodejs"
  handler     = "index.handler"

  timeout     = 30
  memory_size = 2048

  environment_variables = {
    # The new secret_name pushed us over the 4KB limit!
    secret_name = "${local.secret_name}"
    foo         = "${local.foo}"
    bar         = "${local.bar}"
  }
}
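A quick way to see how close a set of environment variables is to that limit is to add up the byte lengths of the keys and values. The sketch below does only that. It is an approximation: the exact accounting AWS uses may differ, and the helper names and sample values are hypothetical.

```python
LAMBDA_ENV_LIMIT_BYTES = 4 * 1024  # the 4KB limit discussed above


def env_size_bytes(env):
    """Approximate total size: UTF-8 bytes of every key plus every value."""
    return sum(len(k.encode("utf-8")) + len(v.encode("utf-8")) for k, v in env.items())


def fits_in_lambda(env):
    """True if the estimated size is within the 4KB limit."""
    return env_size_bytes(env) <= LAMBDA_ENV_LIMIT_BYTES


# Hypothetical config that sits just under the limit...
before = {"foo": "x" * 4000}
# ...until a longer secret name is added.
after = dict(before, secret_name="y" * 100)
```

With these sample values, `before` fits and `after` does not, which is the kind of cliff edge a small rename can push you over.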

We could have picked a shorter name for the secret, but any minor change to any of the other configs in the future would likely have pushed us over the 4KB limit again, so we needed to find a better solution. It was time to get creative again:

- In our Terraform code, I put all the variables I wanted to pass to our Lambda function into a local variable called config_map, which, as the name implies, is a map of all the configs we needed.

- I used jsonencode to convert that map to a JSON string.

- I then used base64gzip to gzip that string, reducing its size by ~50%.

- I passed that compressed string as a single environment variable to the app.

- In the app code, I repeated this process in reverse, base 64 decoding, ungzipping, and parsing the JSON to get back the config data.

The Terraform code looks something like this:

module "app" {
  source = "git::git@github.com:gruntwork-io/package-lambda.git//modules/lambda?ref=v0.3.0"

  name        = "my-app"
  description = "An app deployed with AWS Lambda"

  source_path = "${local.source_path}"
  runtime     = "nodejs"
  handler     = "index.handler"

  timeout     = 30
  memory_size = 2048

  # Crazy hack to get the environment variables to fit
  environment_variables = {
    config = "${base64gzip(jsonencode(local.config_map))}"
  }
}
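On the app side, the reverse process is three steps: base64 decode, gunzip, parse JSON. The app in this story runs on Node.js, so the Python below is only a sketch of those steps, not the app's actual code. `encode_config` is a stand-in for Terraform's base64gzip(jsonencode(...)) that exists purely to produce test input, and the sample values are made up.

```python
import base64
import gzip
import json


def decode_config(value):
    """Undo base64gzip(jsonencode(...)): base64 decode -> gunzip -> parse JSON."""
    compressed = base64.b64decode(value)
    return json.loads(gzip.decompress(compressed).decode("utf-8"))


def encode_config(config_map):
    """Python stand-in for the Terraform side, used only to build sample input."""
    raw = json.dumps(config_map).encode("utf-8")
    return base64.b64encode(gzip.compress(raw)).decode("ascii")


# Hypothetical config values.
sample = {"secret_name": "my-app-secret", "foo": "1", "bar": "2"}
round_tripped = decode_config(encode_config(sample))
```

In a Python Lambda handler, the input would come from the `config` environment variable, for example `decode_config(os.environ["config"])`.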

And finally… it was all working again!

Live long and yak shave

After this horrendous experience, I finally had the app working. What should’ve been a 5-minute fix took days. And at the end of it, I honestly could not remember why. This seems to be common with yak shaving: at the end of the process, you know you spent a ton of time on *something*, something that left you frustrated and exhausted, but the tasks are so random and bizarre that you typically can’t articulate what it was.

Therefore, I went back to my commit log, reconstructed all my steps (or at least all the ones I could figure out), and turned them into this blog post. And now that I’ve shared it with you, the next time your boss asks you why a DevOps project took so long, even if you can’t articulate the exact yaks you were shaving, you can point them here.
