Sometime late in 2025, Large Language Models (LLMs) reached a quality threshold for writing code that made AI coding assistants practical and useful. More and more engineers report using tools such as Claude Code daily, with many going so far as to not even view the code these tools produce.

Software development is the fastest area to adopt these tools and practices, with engineers producing code at an unthinkable velocity compared to a few years ago. Operations and infrastructure management need to match the pace to survive this new era. The problem is that agentic tools introduce unpredictability into production systems, while the whole industry has spent the past 20 years making operations as deterministic as possible. We already see the first mentions of catastrophic consequences of letting these tools loose on production systems.

To balance this newly introduced non-determinism, we now more than ever have to rely on robust platform engineering principles, such as Infrastructure as Code (IaC). IaC can help ensure consistency across environments and security requirements, and enable effective scaling as demand grows.

This blog explores how to effectively set up and use tools like Claude Code with Terraform, OpenTofu, and Terragrunt without handing the keys to your cloud environments to an AI agent.

💡 The optimal configuration of AI assistant tools depends heavily on your needs and setup, so use this blog as inspiration and a starting point rather than as prescriptive guidance.

How infrastructure code is different from application code

Most guidance on using AI coding tools assumes you’re writing application code with languages such as Python or TypeScript. The difference is that when you get something wrong in Terraform, you might lose a production database if you aren’t careful. That difference matters. Infrastructure code maps to real resources, and a refactor that renames a resource could trigger a destroy and recreate sequence.
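
One way to catch an accidental destroy-and-recreate before it reaches review is to read the summary line of a saved plan. The sketch below is illustrative only: the function names and the `tfplan` file name are assumptions, and it relies on the human-readable `Plan: ... to destroy.` summary that `terraform show` prints for a saved plan, which may change between versions.

```shell
#!/usr/bin/env bash
# Sketch: count how many resources a plan would destroy.
# Assumption: the plan text contains Terraform's summary line,
# e.g. "Plan: 1 to add, 0 to change, 1 to destroy."

# Reads plan text on stdin and prints the number of resources to destroy
# (prints 0 when no summary line is present, e.g. "No changes.").
destroy_count() {
  local line count=0
  while IFS= read -r line; do
    if [[ "$line" =~ ([0-9]+)\ to\ destroy ]]; then
      count="${BASH_REMATCH[1]}"
    fi
  done
  echo "$count"
}

# Hypothetical helper: fail when a saved plan file would destroy anything.
guard_plan() {
  local plan_file="${1:-tfplan}" count
  count=$(terraform show -no-color "$plan_file" | destroy_count)
  if (( count > 0 )); then
    echo "Plan would destroy $count resource(s); review before merging." >&2
    return 1
  fi
}
```

A check like `guard_plan tfplan` could run in CI after the plan step, so a rename that turns into a destroy is flagged to the reviewer rather than discovered after merge.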

Potential IaC drift adds another layer to consider. The code in your repo may not match what’s actually running in production. AI assistants don’t inherently know that. They edit your Terraform code assuming it reflects reality, and sometimes it might not.
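
A read-only plan is one way to check whether the code still reflects reality before letting an agent edit it. The sketch below assumes a clean checkout of the main branch and read-only credentials. The function names are hypothetical, while `-detailed-exitcode`, `-lock=false`, and `-input=false` are standard `terraform plan` flags.

```shell
#!/usr/bin/env bash
# Sketch: detect differences between code and live infrastructure with a read-only plan.

# Maps the exit code of `terraform plan -detailed-exitcode` to a label:
# 0 = no changes, 2 = changes present, anything else = error.
describe_plan_exit_code() {
  case "$1" in
    0) echo "in-sync" ;;
    2) echo "drift-or-pending-changes" ;;
    *) echo "error" ;;
  esac
}

# Hypothetical helper: plan one directory without locking state or prompting.
check_drift() {
  local dir="$1" code=0
  terraform -chdir="$dir" plan -detailed-exitcode -lock=false -input=false >/dev/null || code=$?
  describe_plan_exit_code "$code"
}
```

Exit code 2 only says the plan is non-empty. On a branch with unmerged edits that includes the pending changes, which is why the sketch assumes a clean checkout.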

The mental model

How should you be thinking about using these tools with IaC then? Not using them is no longer an option. The velocity gains are too significant to ignore. Three principles before we get into specifics:

- Agents should never deploy directly. Every infrastructure change flows through pull requests, CI, and deployment pipelines. The agent writes code. Humans and pipelines handle the rest. The agent produces diffs, not deployments.

- Least privilege by default. Agents receive only the credentials and tools they need. Short-lived read-only tokens injected at runtime.

- Everything is logged. Every tool call, credential request, and decision point has a trail that can be inspected.

Human in the loop

Following our mental model:

- The agent writes code according to the project specifics, guidelines, and our instructions.

- Humans review and approve the changes with PR-based flows.

- Pipelines deploy it once approved and merged.

Pull requests are the control surface for AI-generated infrastructure changes, which go through the same PR workflow as human-written code. Follow the policies that align with your organization’s needs for deployment triggers. For some orgs, a merge to main is the deployment trigger; other organizations prefer having an explicit approval for production deployments.

What typically goes right: the agent handles boilerplate, creating and connecting variables, dependency blocks, and documentation.

💡 Where you should pay attention: the agent could reference module versions that don’t exist, invent outputs that aren’t exposed by the dependency, or miss required tags. It could also try to write new modules from scratch when it should be reusing existing ones. It might invent CLI arguments and API parameters that don’t exist. That’s why steering markdown documents and skills are important. Always verify your tools’ output.

The permission model

Claude Code offers several soft and hard controls for security and permissions, which we cover in this section. Think of them as complementary layers that you stack on top of each other.

💡 Ultimately, the only thing that guarantees an agent can’t destroy your infrastructure is making sure it never has the permissions and credentials to do so. Start from the assumption that the agent will, at some point, attempt a destructive operation without asking. If it doesn’t have write credentials when that happens, nothing breaks. The remaining layers help with velocity and developer experience, but they can fail. Prompt instructions get ignored. Deny rules get worked around.

The default posture should be read-only for actual production systems. The AI can read your Terraform code, suggest changes, and generate code and modules. It should not have permissions to apply anything.

Here are six layers of controls that you can stack with Claude Code. Adjust each layer according to your organizational needs for maximum security and efficiency:

Layer 1: Credential isolation & least privilege

Before exploring different Claude Code-specific controls, it’s worth noting that having a robust process for credential isolation and least privilege for agentic tooling should be the top priority. This layer can help you avoid unintended consequences if an agent is misconfigured or isn’t steered correctly. If the agent has no write credentials, it cannot destroy your infrastructure, no matter what it tries.

Common anti-patterns:

- Long-lived AWS credentials in ~/.aws/credentials on the machine where the agent runs.

- Long-lived IAM user access keys in environment variables.

- Shared credential profiles between human and agent sessions.

- Credentials shared with agents that aren’t scoped down to specific use cases.

What to do instead:

- Use short-lived, read-only credentials scoped to what the agent needs.

- Store secrets in a secrets manager (AWS Secrets Manager, HashiCorp Vault).

- Give the agent a separate, scoped-down role with read-only permissions to live environments.

The agent should be able to edit manifests, validate its work, and plan if needed, but it should not be able to edit live production systems either via Terraform or Cloud APIs.
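
As a rough illustration of the "short-lived, read-only" advice, the sketch below assumes an IAM role that already carries read-only permissions and starts the agent with credentials that expire after 15 minutes. The role ARN argument, the session name, and both function names are placeholders. The `aws sts assume-role` flags are the standard AWS CLI ones.

```shell
#!/usr/bin/env bash
# Sketch: start an agent session with short-lived, read-only AWS credentials.
# Assumptions: the role is read-only; session name and duration are placeholders.

# Turns the whitespace-separated "key secret token" line printed by
# `aws sts assume-role --output text` into exported environment variables.
export_session_credentials() {
  local access_key secret_key session_token
  read -r access_key secret_key session_token <<<"$1"
  if [[ -z "$access_key" || -z "$secret_key" || -z "$session_token" ]]; then
    echo "could not parse credentials" >&2
    return 1
  fi
  export AWS_ACCESS_KEY_ID="$access_key"
  export AWS_SECRET_ACCESS_KEY="$secret_key"
  export AWS_SESSION_TOKEN="$session_token"
}

# Hypothetical wrapper: assume the role, then launch Claude Code in plan mode.
start_readonly_agent_session() {
  local role_arn="$1" creds
  creds=$(aws sts assume-role \
    --role-arn "$role_arn" \
    --role-session-name "claude-code-readonly" \
    --duration-seconds 900 \
    --query 'Credentials.[AccessKeyId,SecretAccessKey,SessionToken]' \
    --output text) || return 1
  (
    # Subshell: the credentials disappear when the agent session ends.
    export_session_credentials "$creds" || exit 1
    claude --permission-mode plan
  )
}
```

Running the agent from a subshell keeps the temporary credentials out of your own interactive shell, which helps keep human and agent sessions separate.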

Layer 2: Claude Code Permission modes

At the time of writing this, Claude Code has six permission modes that set the baseline autonomy level:

You can cycle between modes with `Shift+Tab` in the CLI. The overall guideline here is to select modes with more control for sensitive work, or fewer interruptions when you trust the direction.

For IaC work, start with default or plan mode. Claude analyzes and proposes, and you approve its plan. Set it in your project settings:

// .claude/settings.json

{

"permissions": {

"defaultMode": "plan"

}

}

Or start a session with plan mode:

claude --permission-mode plan

An example flow:

- Claude reads codebase, analyzes state, creates a proposal.

- You review the plan and ask follow-up questions/demands.

- At the approval prompt you choose:

• Keep planning and iterate on the proposal.

• Approve and accept edits. Claude edits files, still asks for commands.

- Once you are happy with the changes, you follow your normal CI/CD pipelines for deployment.

💡 Also check out the new Ultraplan mode when you want to customize specific sections of a plan, ask for revisions, and then choose where to execute it.

Layer 3: Allow / Ask / Deny rules

Claude Code’s permission system evaluates rules in order: deny first, then ask, then allow. The first match wins. You can view and manage Claude Code’s tool permissions with /permissions.

The exact commands that you deny, allow, or prompt for will depend on your workflow, because some edge cases are hard to match with glob patterns.

For infrastructure repos, deny the dangerous commands explicitly. Here’s an example:

// .claude/settings.json

{

"permissions": {

"defaultMode": "plan",

"deny": [

"Bash(terraform apply *)",

"Bash(terraform destroy *)",

"Bash(terraform state rm *)",

"Bash(terraform state push *)",

"Bash(terraform state replace-provider *)",

"Bash(terraform force-unlock *)",

"Bash(terraform taint *)",

"Bash(terraform untaint *)",

"Bash(tofu apply *)",

"Bash(tofu destroy *)",

"Bash(tofu state rm *)",

"Bash(tofu state push *)",

"Bash(tofu state replace-provider *)",

"Bash(tofu force-unlock *)",

"Bash(tofu taint *)",

"Bash(tofu untaint *)",

"Bash(terragrunt apply *)",

"Bash(terragrunt destroy *)",

"Bash(terragrunt run-all apply *)",

"Bash(terragrunt run-all destroy *)",

"Bash(terragrunt run --all apply *)",

"Bash(terragrunt run --all destroy *)",

"Bash(terragrunt stack run apply *)",

"Bash(terragrunt stack run destroy *)",

"Bash(terragrunt state rm *)",

"Bash(terragrunt state push *)",

"Bash(terragrunt state replace-provider *)",

"Bash(terragrunt force-unlock *)",

"Bash(terragrunt taint *)",

"Bash(terragrunt untaint *)",

"Bash(terragrunt backend delete *)",

"Bash(rm -rf *)"

],

"ask": [

"Bash(terraform plan *)",

"Bash(terraform state mv *)",

"Bash(terraform import *)",

"Bash(tofu plan *)",

"Bash(tofu state mv *)",

"Bash(tofu import *)",

"Bash(terragrunt plan *)",

"Bash(terragrunt run-all plan *)",

"Bash(terragrunt run --all plan *)",

"Bash(terragrunt stack run plan *)",

"Bash(terragrunt state mv *)",

"Bash(terragrunt import *)",

"Edit(live/staging/**)",

"Edit(live/prod/**)",

"Write(live/prod/**)",

"Read(./.env)",

"Read(./.env.*)",

"Read(**/*.tfstate)",

"Read(**/.terraform/**)",

"Read(**/.terragrunt-cache/**)"

],

"allow": [

"Bash(terraform fmt *)",

"Bash(terraform validate *)",

"Bash(tofu fmt *)",

"Bash(tofu validate *)",

"Bash(tflint *)",

"Bash(checkov *)",

"Bash(terragrunt hclfmt *)",

"Bash(terragrunt hcl format *)",

"Bash(terragrunt hclvalidate *)",

"Bash(terragrunt hcl validate *)",

"Bash(terragrunt validate-inputs *)",

"Bash(terragrunt render *)",

"Bash(terragrunt render-json *)",

"Bash(terragrunt graph-dependencies *)",

"Read(modules/**)",

"Read(live/**/*.hcl)",

"Edit(modules/**)"

]

}

}

A word of caution here. Deny rules are necessary but might not always be sufficient. An agent could wrap terraform apply in a bash script and execute the script instead, and the deny rule might miss that. Similarly, `Read()` deny rules only block Claude’s built-in `Read` tool. They don’t block `cat ~/.aws/credentials` via Bash. To block all file access, including subprocess reads, use sandboxing (Layer 6) or a `PreToolUse` hook (Layer 5) that inspects commands.

💡 This is why credential isolation and sandboxing are the real security boundaries. Deny rules reduce noise and keep the agent focused on what it should be doing. They don’t guarantee that the agent can’t do what it shouldn’t.
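
To make the wrapping problem more concrete, here is a minimal sketch of a `PreToolUse` hook that inspects the whole Bash command string instead of relying on prefix matching. The pattern list is an assumption and is deliberately broad, so it can produce false positives, and it still cannot see inside a wrapper script such as `./deploy.sh`. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch of a PreToolUse hook for the Bash tool.
# Assumption: the pattern list is illustrative, not exhaustive.

# Returns 0 when the command string mentions a state-changing IaC operation,
# even when it is nested inside `bash -c`, `&&` chains, or `cd` prefixes.
is_destructive_command() {
  local cmd="$1"
  local re='(terraform|tofu|terragrunt)[[:space:]].*(apply|destroy|state[[:space:]]+(rm|push)|force-unlock|taint)'
  [[ "$cmd" =~ $re ]]
}

# Hook entry point: reads the tool call JSON on stdin (requires jq).
main() {
  local cmd
  cmd=$(jq -r '.tool_input.command // empty')
  if is_destructive_command "$cmd"; then
    echo "Blocked: '$cmd' changes live infrastructure. Open a PR instead." >&2
    exit 2  # exit code 2 blocks the tool call and returns stderr to Claude
  fi
  exit 0
}

# When saving this as a hook script, end the file with a call to: main
```

Registered under `PreToolUse` with a `Bash` matcher, a script like this runs before the command executes. It narrows the gap left by deny rules but does not close it, so the credential and sandbox layers still carry the real guarantee.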

Layer 4: CLAUDE.md and Path-Scoped Rules

CLAUDE.md are markdown files that give Claude persistent instructions for a project. This is where you set expectations for the agent to read at the start of every session. It’s one of the softest layers, but it shapes how the agent approaches your codebase.

CLAUDE.md files can live in several locations, each with a different scope. Start with ./CLAUDE.md or ./.claude/CLAUDE.md at your project level for team-shared instructions that should be committed to git.

Here’s an example of how you can configure your CLAUDE.md for Terraform:

# CLAUDE.md — Terraform Project Guidelines

## Infrastructure-as-Code Project Context

This is an AWS infrastructure monorepo managed with Terragrunt.

All modules live in `modules/`. All live configurations live in `live/`.

## Rules

- Never run `terraform apply`, `terraform destroy`, `-auto-approve`, or `terragrunt apply`. All deployment changes go through PR workflows.

- Never modify files in `live/prod/` without explicit instructions.

- Always reference existing modules in `modules/` before writing new ones.

- All resources MUST include tags: Environment, Team, ManagedBy.

- Use the naming convention: {env}-{service}-{resource}

- Always run `terraform validate` after editing any .tf file

- Never store secrets in .tf files. use Secrets Manager, Vault, or SSM Parameter Store

### Safe Commands (auto-allowed)

```

terraform fmt

terraform validate

terragrunt hcl format

terragrunt hclfmt

terragrunt hclvalidate

terragrunt validate-inputs

terragrunt graph-dependencies

terragrunt render-json

terragrunt render

tflint

checkov

```

## Repository structure

- `modules/` — Reusable modules (never deploy directly)

- `live/{dev,staging,prod}/` — Environment-specific configs

- `live/*/backend.tf` — State backend config (DO NOT MODIFY)

- `live/*/versions.tf` — Provider pins (discuss before changing)

- `.claude/settings.json` — Claude Code permissions, sandbox, and hooks

- `.claude/hooks/validate-tf.sh` — Post-edit validation hook

live/

├── dev/

├── staging/

└── prod/

modules/

├── vpc/

├── ecs-service/

└── rds/

## Terraform Conventions

- Terraform >= 1.6, provider versions pinned in `versions.tf`

- All resources tagged: `Environment`, `Team`, `ManagedBy = "terraform"`

- Use `for_each` over `count` for named resources

- Use `data "aws_iam_policy_document"` over inline JSON

- `lifecycle { prevent_destroy = true }` on all stateful resources

- No provider blocks inside modules. Inherit from root

## Workflow (Terraform)

1. `terraform init` — if needed for project initialization

2. `terraform validate` — check syntax

3. `terraform fmt` — normalize formatting

4. `tfsec .` / `checkov -d .` — security scan

5. `terraform plan -out=tfplan` — generate plan, present to user; deployment happens via the PR pipeline, never from the agent's shell.

## Workflow (Terragrunt)

1. `terragrunt hcl format` — normalize HCL (or `terragrunt hclfmt` on <1.0)

2. `terragrunt hcl validate` — check terragrunt.hcl / root.hcl syntax

3. `terragrunt validate-inputs` — confirm inputs match the underlying module

4. `terragrunt validate` — delegated `terraform validate` inside the unit

5. `terragrunt run plan -out=tfplan` — generate plan, present to user; deployment happens via the PR pipeline, never from the agent's shell.

## What Requires Human Approval

- IAM role/policy changes

- Security group modifications

- VPC/subnet/routing changes

- Any change to `live/prod/`

- Provider version upgrades

- Backend configuration changes

## Security Requirements

- S3 buckets: `server_side_encryption_configuration` + `versioning` + `block_public_access`

- IAM: least-privilege, `description` on all roles, no `*` actions without justification

- Security groups: no `0.0.0.0/0` ingress on 22/3389

- RDS: `storage_encrypted = true`, `deletion_protection = true`

- EKS: `endpoint_public_access = false`

- Flag any `0.0.0.0/0` or `*` wildcard as a review item

Path-Scoped Rules

You can organize rules and instructions into multiple files using the .claude/rules/ directory. This is extremely useful when you want to apply rules to specific file paths or types. For example, these rules apply to all .tf and .tfvars files:

// .claude/rules/terraform.md

---

paths:

- "**/*.tf"

- "**/*.tfvars"

---

# Terraform File Rules

- Run `terraform fmt` after every edit

- All variables: `description` + `type` required

- All outputs: `description` required

- Sensitive variables: `sensitive = true`

- No hardcoded account IDs, regions, or ARNs. Use variables/data sources

- Prefer `data "aws_iam_policy_document"` over inline JSON

- Never use `ignore_changes = all`. Be explicit

Layer 5: Hooks

Hooks are shell commands, HTTP endpoints, or LLM prompts that fire at specific lifecycle points. They have the benefit of being deterministic. They offer a more reliable mechanism for governance than CLAUDE.md instructions. Check this guide for setting up hooks for the first time and here’s the full list of events that you can use.

For IaC purposes, here are a few use cases for hooks for inspiration:

Here’s an example of a PostToolUse hook that automatically runs `terraform validate` to ensure the Terraform configuration is valid after changes to .tf files. Register the hook in .claude/settings.json so that the script runs after any Edit or Write tool call:

// part of .claude/settings.json

{

"hooks": {

"PostToolUse": [

{

"matcher": "Edit|Write",

"hooks": [

{

"type": "command",

"command": "\"$CLAUDE_PROJECT_DIR\"/.claude/hooks/validate-tf.sh"

}

]

}

]

}

}

And here’s the script we want to trigger:

#!/bin/bash

# .claude/hooks/validate-tf.sh

set -euo pipefail

INPUT=$(cat)

FILE_PATH=$(echo "$INPUT" | jq -r '.tool_input.file_path // empty')

# Only trigger on .tf files

[[ "$FILE_PATH" != *.tf ]] && exit 0

TF_DIR=$(dirname "$FILE_PATH")

# Ensure terraform is available

if ! command -v terraform &>/dev/null; then

jq -n '{decision: "block", reason: "terraform not found on PATH — cannot validate .tf files."}'

exit 0

fi

# Ensure terraform has been initialized

if [[ ! -d "$TF_DIR/.terraform" ]]; then

jq -n --arg dir "$TF_DIR" '{decision: "block", reason: "terraform init has not been run in \($dir). Run terraform init before editing .tf files."}'

exit 0

fi

# Run terraform validate

RESULT=$(terraform -chdir="$TF_DIR" validate -json 2>&1 || true)

VALID=$(echo "$RESULT" | jq -r '.valid // false' 2>/dev/null || echo "false")

if [[ "$VALID" != "true" ]]; then

ERRORS=$(echo "$RESULT" | jq -r '.diagnostics[]? | "\(.severity): \(.summary) - \(.detail)"' 2>/dev/null || echo "terraform validate failed (could not parse output)")

jq -n --arg reason "terraform validate FAILED after editing $FILE_PATH:\n$ERRORS\n\nFix these errors before proceeding." '{

decision: "block",

reason: $reason

}'

exit 0

fi

exit 0

To test that this works, ask Claude Code to introduce an invalid change to a .tf file. Here, since we are trying to introduce an invalid `totally_fake_attribute`, the hook runs right after the edit and returns a blocking error to Claude:

Update(main.tf)

⎿ Added 1 line

16 resource "aws_instance" "app_server" {

17 ami = data.aws_ami.ubuntu.id

18 instance_type = "t2.micro"

19 + totally_fake_attribute = "this_does_not_exist"

20

21 tags = {

22 Name = "learn-terraform"

⎿ PostToolUse:Edit hook returned blocking error ⎿ terraform validate FAILED after editing

main.tf:\nerror: Unsupported argument - An argument named "totally_fake_attribute" is not expected here.\n\nFix these errors before proceeding.

💡 Other ideas that could be useful to configure as hooks:

• A PreToolUse hook that blocks destructive Terraform commands (e.g. `terraform destroy`, `terraform state rm` ).

• A PostToolUse hook that runs tflint and checkov on any Terraform file the agent edits.

• Protect critical files from edits (e.g. state files, backend files, Claude settings).

• Audit logging for compliance when Claude settings files change.

Hooks are just one layer in the stack. They don’t replace code review or CI checks. But they can catch problems early.
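
As an illustration of the "protect critical files" idea, the sketch below shows a `PreToolUse` hook for `Edit|Write` calls that refuses edits to state files, backend configuration, and the Claude settings file. The list of protected paths and the function names are assumptions to adapt to your own repository layout.

```shell
#!/usr/bin/env bash
# Sketch of a PreToolUse hook that protects critical files from agent edits.
# Assumption: the protected patterns below are examples, not a complete list.

# Returns 0 when the given path should never be edited by the agent.
is_protected_path() {
  case "$1" in
    *.tfstate | *.tfstate.backup) return 0 ;;
    backend.tf | */backend.tf) return 0 ;;
    .claude/settings.json | */.claude/settings.json) return 0 ;;
    *) return 1 ;;
  esac
}

# Hook entry point: reads the tool call JSON on stdin (requires jq).
main() {
  local file_path
  file_path=$(jq -r '.tool_input.file_path // empty')
  if is_protected_path "$file_path"; then
    echo "Blocked: $file_path is protected; ask a human to change it." >&2
    exit 2  # exit code 2 blocks the edit and returns stderr to Claude
  fi
  exit 0
}

# When saving this as a hook script, end the file with a call to: main
```

Because this check runs before the tool call, the file is never modified, unlike the PostToolUse validation above, which reacts after the edit has been written.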

Layer 6: Sandboxing

Claude Code’s sandbox settings restrict filesystem and network access at the OS level, and they apply to all subprocess commands.

Sandboxing is critical for IaC workflows because it blocks agents from destructive operations even if they try to perform them. Human-in-the-loop flows could also work instead, but we quickly get into the “approval fatigue” zone with constant interruptions.

Sandboxing defines clear boundaries on which directories and network hosts Claude Code can access and which commands aren’t allowed, enabling Claude Code to run more independently within defined limits.

💡 Sandboxing and permissions (from layers 2 and 3) are complementary security layers that work together. Permissions control which tools Claude Code can use, and sandboxing provides the extra OS-level enforcement that restricts what these commands can access at the filesystem and network level.

You can enable sandboxing by running the `/sandbox` command. There are two sandbox modes:

- Auto-allow mode: Explicit deny rules are always respected here. Bash commands that can run inside the sandbox are automatically allowed. If a command can’t be sandboxed (network call to non-allowed host), it falls back to regular permission flow.

- Regular permissions mode: All commands still go through the standard permission flow.

Here’s an example sandbox configuration that denies reads and writes to specific paths and controls which domains Bash commands can reach:

// part of .claude/settings.json

"sandbox": {

"enabled": true,

"failIfUnavailable": true,

"autoAllowBashIfSandboxed": false,

"allowUnsandboxedCommands": false,

"filesystem": {

"denyWrite": [

"/etc",

"/usr",

"~/.bashrc",

"~/.zshrc",

"~/.aws/credentials",

"~/.ssh"

],

"denyRead": [

"~/.aws/credentials",

"~/.ssh/id_rsa"

]

},

"network": {

"allowedDomains": [

"api.github.com"

]

}

}

Here you can find detailed explanations for all sandbox settings.

Some things to consider for sandboxing:

- Begin with restrictive and minimal permissions and expand as needed.

- Review sandbox violation attempts to understand what’s happening.

- You can define different sandbox rules for development vs. production contexts.

- Use sandboxing alongside policies and permissions for comprehensive security.

- Test and verify your sandbox settings don’t block legitimate workflows.
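
To verify the last point in practice, one option is a small smoke test that you ask the agent to run through its Bash tool inside the sandboxed session. The sketch below is a hypothetical helper, not a Claude Code feature: it tries to read each path it is given and reports any that are still readable.

```shell
#!/usr/bin/env bash
# Sketch: smoke test for sandbox read restrictions.
# Assumption: the paths passed in are regular files you expect to be blocked.

# Returns 0 when the first byte of the file can actually be read.
can_read() {
  [[ -f "$1" ]] && head -c 1 "$1" >/dev/null 2>&1
}

# Prints one line per path and fails when any path is readable.
check_sandbox() {
  local leaks=0 path
  for path in "$@"; do
    if can_read "$path"; then
      echo "LEAK: $path is readable"
      leaks=$((leaks + 1))
    else
      echo "ok: $path is not readable"
    fi
  done
  (( leaks == 0 ))
}
```

For example, `check_sandbox ~/.aws/credentials ~/.ssh/id_rsa` should print only `ok` lines when the `denyRead` entries above are in effect. A path that does not exist also reports `ok`, so run the check against files that are really present.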

Dev containers are another option that provides strong isolation. The agent runs in a container with only the tools and access you define, and its enhanced security measures (isolation and firewall rules) allow you to feel safer when bypassing permission prompts for unattended operations on IaC flows.

Development containers can be useful for isolating different customer environments, creating preconfigured development environments for new team members, and setting up consistent CI/CD environments for IaC workflows. Check out how to get started with Dev Containers.

Understand Context Management

An important concept to understand is context management. Claude Code operates within a context window that fills as you work, with each file read, command output, and conversation message consuming tokens. When context fills up, Claude Code automatically compresses older messages, but that compression means that you might lose important information.

What loads automatically at startup:

- Your CLAUDE.md files (project root + parent directories)

- Auto memory (MEMORY.md)

- System prompt, MCP tool names, and skill descriptions.

Key commands to know:

- /compact - manually summarize the conversation to free up space. You can guide what to preserve, e.g. /compact focus on the EKS module refactor.

- /clear - reset context entirely. 💡 Use this between unrelated tasks.

- /context - see a live breakdown of what's consuming tokens, with optimization suggestions.

Practical tips:

- Clear often. Starting a new task? Hit /clear. Context pollution from a previous failed approach is a common source of inaccurate responses.

- Keep CLAUDE.md lean. Only include what Claude can't infer from the code itself, such as build commands, testing conventions, and repo gotchas.

- Use subagents for heavy exploration. When Claude needs to search across many files, subagents run in their own context window. Your main session stays clean and you only get back the subagent’s results.

- Scope your requests narrowly to keep things focused.

Customize and Extend Claude Code

Claude Code offers various options for customization to make your IaC generation targeted and suited to your style, needs, organizational preferences, and policies.

CLAUDE.md

As discussed previously during the permission layer discussion, CLAUDE.md is a special file that Claude reads at the start of every conversation. Include Bash commands, code style, and workflow rules. This gives Claude a persistent context that it can’t infer from code alone.

CLAUDE.md is loaded every session, so only include things that apply broadly at this level. For domain-specific knowledge or flows, we will look later into skills that are loaded on demand. Check the Layer 4 section in the permission model for a full CLAUDE.md file example.

Commands

Commands provide built-in controls for common operations such as managing permissions and context, and switching permission modes or models. You can type `/` to see available commands.

Here are some example commands that could be useful when writing IaC with Terraform:

- /diff - Interactive diff viewer to review exactly what Claude changed in your Terraform files before committing.

- /compact - Free up context in long sessions. Useful when iterating on large module refactors.

- /security-review - Analyze pending branch changes for security vulnerabilities in your infra code.

- /simplify - Review changed Terraform code for quality, reuse, and efficiency.

- /rewind - Undo Claude's changes if a Terraform edit went wrong and roll back to a previous state.

- /memory - Persist Terraform conventions across sessions as you optimize your usage.

💡 Two relatively new commands that can be useful to level up your game with Claude Code:

• /powerup - An interactive feature discovery tool. It presents quick lessons with demos to help you learn Claude Code capabilities you might not know about (like hooks, custom skills, MCP servers, etc.).

• /insights - Analyzes your past Claude Code sessions and generates a usage report. It shows you what projects you've worked on, what's working well, where friction happened, and gives personalized suggestions to improve your workflow.

Skills and context

Agent Skills are markdown files that the agent reads and follows. They’re the best practices layer with modular capabilities that extend Claude's functionality. Skills are reusable, filesystem-based resources that provide Claude with domain-specific expertise: workflows, context, and best practices that transform general-purpose agents into specialists.

Skills load on demand and help solve the need to repeatedly provide the same guidance across multiple conversations.

💡 A caveat here is that agents may or may not follow skills consistently. They’re not deterministic like hooks. Think of them as coaching, not explicit enforcement.

An example skills directory for an IaC project:

.claude/skills/

├── terraform-plan/

│ └── SKILL.md # Plan dependencies before writing HCL

├── ship/

│ └── SKILL.md # Git commit and push guidance

You can create your own custom skills or use skills that others have created. For example, here’s a `ship` custom skill that Claude Code created for me, used for committing and pushing code to GitHub so I don't have to type the commands or explain what I want every time:

// .claude/skills/ship/SKILL.md

---

name: ship

description: Stage, commit, and push changes to the current branch safely.

disable-model-invocation: false

user-invocable: true

argument-hint: "[commit-message]"

allowed-tools: Bash(git status *) Bash(git diff *) Bash(git add *) Bash(git commit *) Bash(git push *) Bash(git log *)

---

# Commit and Push

## Steps

1. Run `git status` to show the branch and changed files

2. Run `git diff` to review what will be committed

3. Ask the user for confirmation before proceeding

4. Commit: Stage the relevant changed files (never stage secrets, `.env`, or unrelated files). Write a concise commit message that describes the changes. If the user provided a message via `$ARGUMENTS`, use that as the commit message. Always append the co-author trailer:

```

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

```

5. Push: Push to the current branch on origin.

6. Report: Show the user the commit hash, the branch pushed

## Rules

- Never use `git add -A` or `git add .` — stage specific files only.

- Never commit files that look like secrets (`.env`, credentials, tokens).

- Never force push

- Never use --no-verify

- If no commit message is provided, ask the user for one

- If on main/master, warn the user before pushing

It can be invoked by typing /ship or by asking Claude Code to use the ship skill to commit and push specific files to GitHub.

💡 Here’s a community-built Terraform skill that could be quite useful. Consider adjusting this to your workflow: terraform-skill.

MCP Servers

The Model Context Protocol (MCP) allows extension of agentic AI tools with external data sources, APIs, and other tooling.

A few MCP servers that you could find interesting for productivity and IaC use cases:

- Atlassian Rovo

- AWS (full list of open source MCP Servers for AWS)

- Notion

- Slack

Here’s a guide on how to install MCP servers for Claude Code. You can install MCP at different levels: local, project, user.

Let’s set up the AWS MCP server as an example:

// .mcp.json at the project root

{

"mcpServers": {

"aws-mcp": {

"command": "uvx",

"timeout": 100000,

"transport": "stdio",

"args": [

"mcp-proxy-for-aws@latest",

"https://aws-mcp.us-east-1.api.aws/mcp",

"--metadata",

"AWS_REGION=us-west-2"

]

}

}

}

Next, we need the necessary AWS permissions to be able to use this MCP server.

💡 Note, the AWS MCP server exposes read and write AWS tools via aws-mcp:CallReadWriteTool. As discussed, we don’t want to allow write operations, so we need to give Claude Code read-only permissions with an IAM policy that grants only aws-mcp:CallReadOnlyTool + aws-mcp:InvokeMcp:

// read-only-access-aws-mcp.json

{

"Version": "2012-10-17",

"Statement": [{

"Effect": "Allow",

"Action": [

"aws-mcp:InvokeMcp",

"aws-mcp:CallReadOnlyTool"

],

"Resource": "*"

}]

}

You can now use it with Claude Code to call read-only tools in the AWS MCP service. For example, listing EC2 instances in a specific region.

💡 Tool search keeps MCP context usage low by deferring tool definitions until Claude needs them to avoid having an impact on your context window.

Plugins

Plugins package skills, agents, hooks, and MCP servers into a single unit, allowing you to share custom functionality easily with projects and teams. You can discover and install prebuilt plugins through marketplaces, for example, the official Anthropic plugins repository.

A few plugins you might find useful for IaC work:

- Code Review - AI code review with specialized agents and confidence-based filtering for pull requests

- GitHub - Create issues, manage PRs, review code, search repos, and access GitHub's API from Claude Code.

- AWS Plugins - Best practices to architect, deploy, and operate on AWS.

Advanced Usage Options

- subagents for task-specific isolated context workflows. Subagents are specialized AI assistants that handle specific types of tasks. Use one when a side task can run independently, without polluting your context with its details. The subagent does that work in its own context and returns only the summary. Define a custom subagent when you keep spawning the same kind of worker with the same instructions. Each subagent runs in its own context window with a custom system prompt, specific tool access, and independent permissions.

💡 Here’s a subagent example you could take a look at: terraform-engineer. This could be used as a separate subagent in projects where Terraform development is only one part of the whole flow.

- agent teams for multiple independent sessions with shared tasks and peer-to-peer messaging. One session acts as the team coordinator/lead, assigning tasks and synthesizing results. Teammate agents work independently, each in its own context window, and communicate directly with each other. Unlike subagents, which run within a single session and can only report back to the main agent, you can also interact with individual agent teammates directly without going through the lead.

- Non-interactive mode (claude -p "...") to run Claude headlessly in CI/CD. Example: claude -p "validate all Terraform files and check for security issues" in a pipeline.
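
As a rough sketch of the non-interactive mode in a pipeline, the script below limits the review to IaC files changed on the branch and keeps the agent in plan mode so it cannot edit anything. The function names, the prompt wording, and the `origin/main` base ref are assumptions. `claude -p` and `--permission-mode plan` are the options shown earlier in this post.

```shell
#!/usr/bin/env bash
# Sketch: headless review of changed IaC files in CI.
# Assumptions: base ref, prompt text, and function names are placeholders.

# Keeps only Terraform/OpenTofu/Terragrunt files from a list on stdin.
filter_iac_files() {
  grep -E '\.(tf|tfvars|hcl)$' || true
}

# Hypothetical CI step: ask Claude for a read-only review of the changed files.
review_iac_changes() {
  local base_ref="${1:-origin/main}" files
  files=$(git diff --name-only "$base_ref"...HEAD | filter_iac_files)
  if [[ -z "$files" ]]; then
    echo "No IaC changes to review."
    return 0
  fi
  claude -p "Review these changed files for invalid Terraform and security issues. Do not modify anything: $files" \
    --permission-mode plan
}
```

Scoping the prompt to the changed files keeps the headless session's context small, in line with the context management tips above.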

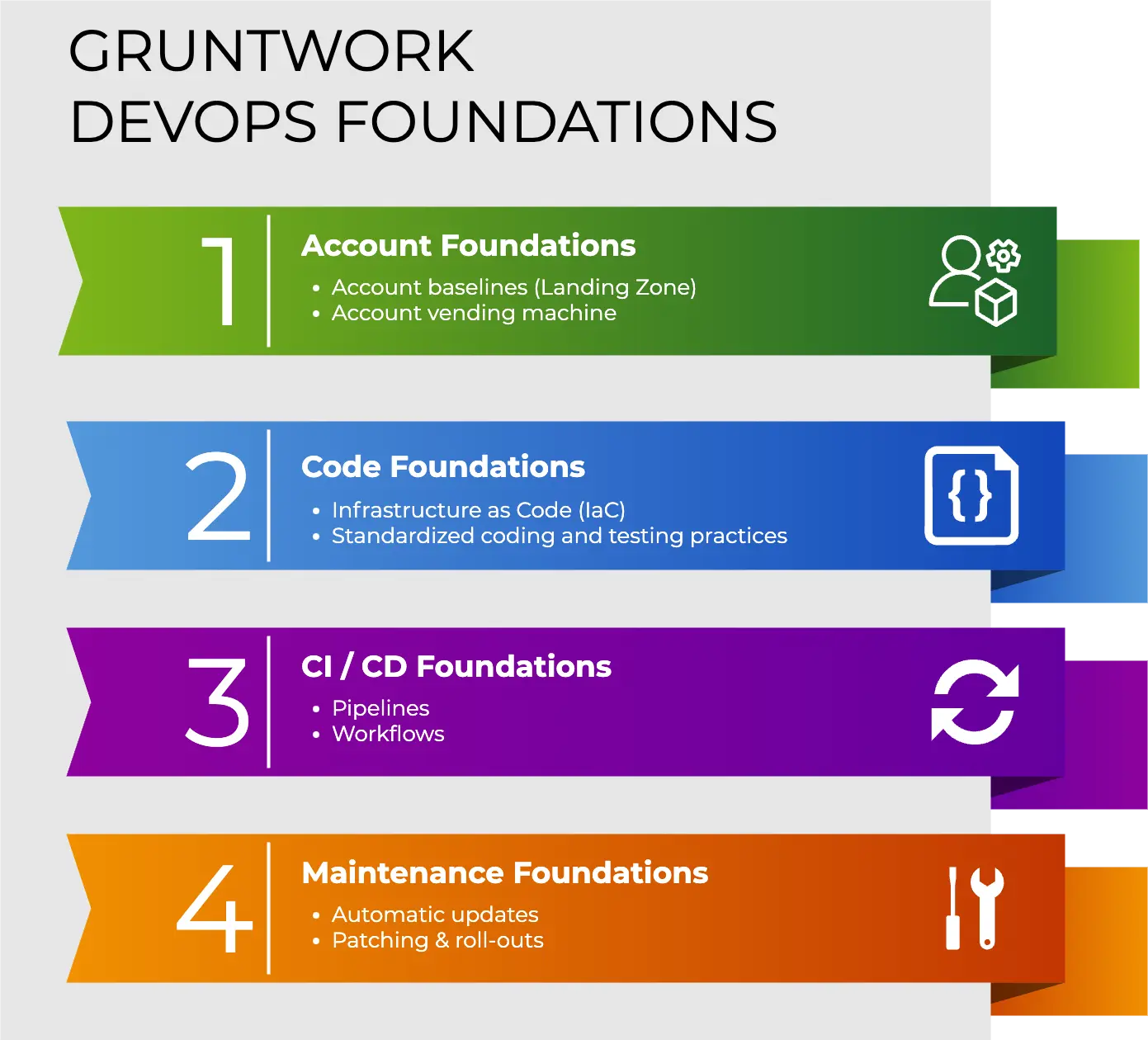

How Gruntwork can help

Gruntwork provides an opinionated, pre-built DevOps platform for OpenTofu and Terraform. They are also the makers of Terragrunt and Terratest, and a co-founder of OpenTofu. The Gruntwork ecosystem maps directly to the robust platform engineering principles that are vital for effective agentic AI use.

Gruntwork released Terragrunt 1.0 in March 2026 with stacks, streamlined CLI, a filter mechanism, run reports, performance improvements, and backward compatibility guarantees.

Pre-built modules

Gruntwork’s IaC Library gives AI agents something validated to reference. Over 300 production-grade modules for AWS covering networking, compute, databases, and security. The agent references real, versioned modules instead of inventing its own from scratch.

Terragrunt for structure

Terragrunt’s conventions for keeping configurations DRY, managing remote state, and handling dependencies through a DAG give the agent a well-defined structure to work within. Less ambiguity in the codebase means fewer potential hallucinations in the output.

Pipelines as the enforcement layer

Gruntwork Pipelines is PR-driven CI/CD for Terraform and Terragrunt. They offer a minimal setup experience, followed by a very intuitive model for driving infrastructure updates. A plan runs automatically when you open a PR and is applied on merge. Even if an agent writes the code, pipelines enforce the workflow with human in the loop for merges.

Patcher for safe upgrades

When modules get updated, Patcher handles version bumps and breaking changes. This is work an AI could attempt, but would likely get wrong because it requires understanding version compatibility across your dependency graph.

Stacks for blast radius control

Terragrunt Stacks group infrastructure into logical, versioned units. For AI agents, this provides bounded reasoning and a way to scope their work within a stack without accidentally affecting unrelated infrastructure. For humans, working on separate stacks produces plans and diffs that are scoped tightly enough for a person to actually review.

Drift detection

Gruntwork Drift Detection catches when live infrastructure diverges from code, whether caused by a human or an AI. Agents reason from the code in the repo, so drift detection is critical for the agent's assumptions to hold when it suggests edits. Once drift is detected, you can route it back through the same PR-driven pipeline.

Conclusion

AI coding assistants can make you faster at writing infrastructure code. The question is whether you can trust what they write. The answer is to build on top of solid platform engineering principles. AI amplifies your workflow. If that workflow has no guardrails, it amplifies that too.

When using AI tooling, combine well-established security and platform engineering practices with agentic guardrails: credential isolation, least privilege, sandboxing, hooks, permissions, policy checks, automated testing, and human reviews.

To get the most out of AI coding assistants, spend some time customizing their behavior according to your organizational needs. A great place to start is the Claude Code Best Practices. Look into steering files, CLAUDE.md, agent skills, connecting relevant tools via MCP, automating bash workflows with hooks and agent plugins.

Combine the power of AI coding assistants with robust IaC practices provided by Gruntwork to turbocharge your workflows. The Terragrunt Scale Free Tier is now generally available (GA), allowing you to set up world-class CI/CD for Terragrunt in minutes. Book a demo today!
